Quantum computing has made the same promise for thirty years. Solve problems that classical machines cannot. The hardware kept failing. Errors ran too high. Real use stayed in labs. The latest breakthroughs in quantum computing are changing that. Some of the hardest problems are finally being solved. If you follow AI news today, you have seen quantum appear next to machine learning and enterprise AI more often. Businesses can no longer ignore it. This article covers what changed, what it means, and what it still does not mean in practice.

How Quantum Computing Works: The Basics Worth Knowing

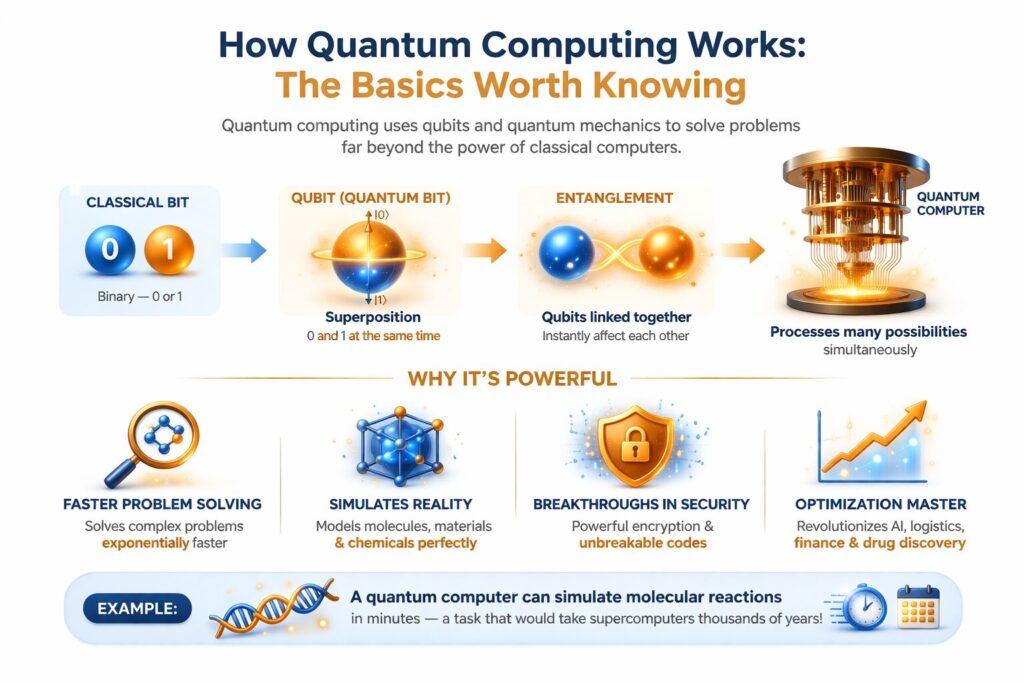

A classical computer stores data as bits. Each bit is a 0 or a 1. A quantum computer uses qubits. A qubit can hold a weighted combination of 0 and 1 at the same time. This is called superposition. It is a real physical property. It does not simply check every answer at once. Measuring a qubit still returns a single 0 or 1. Quantum algorithms use interference to make the right answers more likely to appear.
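As a rough illustration of superposition, a single qubit can be modelled as two amplitudes, one for 0 and one for 1. The sketch below is plain Python, not a real quantum SDK, and the function names `probabilities` and `hadamard` are made up for this example. It assumes an ideal, noise-free qubit.

```python
import math

# A single-qubit state is a pair of amplitudes (alpha, beta) for |0> and |1>.
def probabilities(state):
    """Return (P(0), P(1)) for a normalised single-qubit state."""
    alpha, beta = state
    return abs(alpha) ** 2, abs(beta) ** 2

def hadamard(state):
    """Apply the Hadamard gate, which turns |0> into an equal superposition."""
    alpha, beta = state
    s = 1 / math.sqrt(2)
    return (s * (alpha + beta), s * (alpha - beta))

zero = (1.0, 0.0)            # the classical-like state |0>
plus = hadamard(zero)        # equal superposition of |0> and |1>

print(probabilities(zero))   # certain to read 0
print(probabilities(plus))   # close to an even split between 0 and 1
```

The amplitudes carry the superposition. A measurement only ever reports one outcome, with the probabilities shown.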

A second property is quantum entanglement. Link two qubits, and their measurement results stay correlated. Distance does not matter, although the link cannot be used to send a signal. Combined with superposition, this lets certain algorithms work with far more information per step than classical systems can.
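One way to picture entanglement is to sample measurements from a two-qubit Bell state. This is a toy sketch under ideal conditions. The names `BELL_STATE` and `measure` are illustrative, and the fixed seed is only there to make the run repeatable.

```python
import random

# Amplitudes of the two-qubit Bell state (|00> + |11>) / sqrt(2),
# indexed by the joint outcomes "00", "01", "10", "11".
BELL_STATE = {"00": 2 ** -0.5, "01": 0.0, "10": 0.0, "11": 2 ** -0.5}

def measure(state, rng):
    """Sample one joint measurement outcome with probability |amplitude|^2."""
    outcomes = list(state)
    weights = [abs(state[o]) ** 2 for o in outcomes]
    return rng.choices(outcomes, weights=weights, k=1)[0]

rng = random.Random(7)  # fixed seed so the sketch is repeatable
shots = [measure(BELL_STATE, rng) for _ in range(1000)]

# Each qubit alone looks like a fair coin, yet the two always agree.
agree = sum(1 for s in shots if s[0] == s[1])
first_is_one = sum(1 for s in shots if s[0] == "1")
print(agree, first_is_one)
```

Every shot gives matching bits, but neither side can choose which bit appears. That is why entanglement produces correlation without communication.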

Quantum gates are the working parts of a quantum computer. They are the quantum version of logic gates. Chains of gates form quantum circuits. The circuit runs the algorithm. Measuring the qubits at the end gives the result.
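A chain of gates can be sketched as a list of small matrices applied in order. The example below is a minimal single-qubit simulator written for this article, not any vendor's API. The helper names `apply`, `run_circuit`, and `measurement_probabilities` are assumptions.

```python
import math

S = 1 / math.sqrt(2)
# Single-qubit gates as 2x2 matrices.
H = [[S, S], [S, -S]]      # Hadamard: creates or undoes superposition
Z = [[1, 0], [0, -1]]      # Z: flips the sign of the |1> amplitude
X = [[0, 1], [1, 0]]       # X: the quantum NOT gate

def apply(gate, state):
    """Multiply a 2x2 gate matrix by a two-amplitude state vector."""
    return [gate[0][0] * state[0] + gate[0][1] * state[1],
            gate[1][0] * state[0] + gate[1][1] * state[1]]

def run_circuit(gates, state):
    """Apply gates in order, left to right, and return the final state."""
    for gate in gates:
        state = apply(gate, state)
    return state

def measurement_probabilities(state):
    """Probability of reading 0 and of reading 1 at the end of the circuit."""
    return [abs(a) ** 2 for a in state]

final = run_circuit([H, Z, H], [1.0, 0.0])
print(measurement_probabilities(final))
```

The circuit H, Z, H behaves like a single NOT gate. The sign flip in the middle makes the 0 amplitudes cancel, which is interference at its smallest scale.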

The biggest problem is noise. Qubits are sensitive to heat, vibration, and interference. Any disturbance causes decoherence. The qubit loses its state. The calculation breaks. Controlling decoherence is the core problem of quantum hardware. Every other challenge connects back to it.

The Error Correction Problem: Why It Defines Everything

Start here to understand the recent advancements in quantum computing. Quantum error correction is not one milestone among many. Every other milestone depends on it.

Bigger quantum systems used to mean more errors. The fix was to spread data across several physical qubits to form one reliable logical qubit. Errors in individual qubits could be caught and fixed. The computation would not stop.
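The idea of spreading one value across several carriers has a simple classical cousin, the repetition code. The sketch below uses it as an analogy only. Real quantum codes cannot copy or directly read data qubits, so they measure parity checks instead. The function names are made up for the example.

```python
def encode(bit, copies=3):
    """Spread one logical bit across several physical bits."""
    return [bit] * copies

def flip(bits, positions):
    """Return a copy of bits with the given positions flipped (simulated errors)."""
    return [b ^ 1 if i in positions else b for i, b in enumerate(bits)]

def decode(bits):
    """Recover the logical bit by majority vote."""
    return 1 if sum(bits) > len(bits) / 2 else 0

codeword = encode(1)                     # [1, 1, 1]
one_error = flip(codeword, {0})          # [0, 1, 1]
two_errors = flip(codeword, {0, 2})      # [0, 1, 0]

print(decode(one_error))   # 1: a single error is outvoted and corrected
print(decode(two_errors))  # 0: two errors overwhelm a three-bit code
```

One error is absorbed. Two are not. Larger codes tolerate more errors, but only if each physical carrier is reliable enough to begin with.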

The problem was the surface code threshold. This is the error rate a system must stay below for correction to work. Below it, more qubits mean fewer errors. Above it, errors grow. No processor had crossed that line at real scale. Then Google’s Willow chip did.
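The threshold effect can be seen in a toy model. The sketch below computes the failure rate of a majority vote over independent noisy bits. This is a classical repetition code, not the surface code, and its threshold sits at 0.5 rather than the far lower rates real hardware has to beat. The function name `logical_error_rate` is an assumption.

```python
from math import comb

def logical_error_rate(p, distance):
    """Chance that a majority vote over `distance` bits gives the wrong answer,
    assuming each bit flips independently with probability p."""
    majority = distance // 2 + 1
    return sum(comb(distance, k) * p ** k * (1 - p) ** (distance - k)
               for k in range(majority, distance + 1))

for p in (0.1, 0.6):   # one rate below this toy code's 0.5 threshold, one above
    rates = [logical_error_rate(p, d) for d in (3, 5, 7)]
    print(p, [round(r, 4) for r in rates])
```

At a physical error rate of 0.1, the logical error rate falls as the code grows from three to seven bits. At 0.6, it rises. The same split is what the threshold describes.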

As Willow scaled from code distance three to five to seven, error rates halved at each step. Theory predicted this. Hardware had never shown it before. Google’s Willow paper, published in Nature, underwent peer review. That matters in a field where press releases and published science often differ.
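If the halving pattern held at larger sizes, the arithmetic would look like the sketch below. The starting error rate and the target are hypothetical numbers chosen for illustration, not Willow measurements, and the function names are assumptions. Whether the trend continues at larger distances is an open engineering question.

```python
def projected_error(start_error, start_distance, distance, suppression=2.0):
    """Logical error rate if every +2 in code distance divides it by `suppression`."""
    steps = (distance - start_distance) // 2
    return start_error / suppression ** steps

def distance_for_target(start_error, start_distance, target, suppression=2.0):
    """Smallest odd distance whose projected error is at or below `target`."""
    distance = start_distance
    while projected_error(start_error, start_distance, distance, suppression) > target:
        distance += 2
    return distance

# Hypothetical numbers, chosen only to illustrate the trend.
START_ERROR = 1e-2      # assumed logical error rate per cycle at distance 3
print([projected_error(START_ERROR, 3, d) for d in (3, 5, 7)])
print(distance_for_target(START_ERROR, 3, target=1e-6))   # 31
```

Halving compounds quickly. It also shows why a larger suppression factor matters, since it shrinks the code distance, and so the qubit count, needed for a given target.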

Before Willow, scaling meant more errors. Now it can mean fewer. That is why the latest breakthroughs in quantum computing matter beyond the numbers.

Major Quantum Computing Breakthroughs: What the Leading Processors Achieved

Google Willow

Willow holds 105 superconducting qubits. Gate fidelity hit 99.97% for single-qubit operations. It reached 99.88% for entangling gates and 99.5% for readout. These are among the highest figures ever recorded on a superconducting processor.
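To see why a figure like 99.88% still leaves work to do, consider a crude estimate of how errors pile up over a long circuit. The sketch assumes every gate fails independently and that nothing corrects or mitigates the errors. Real devices are more complicated, and the function names are made up for the example.

```python
import math

def circuit_success(fidelity, gate_count):
    """Crude estimate: probability that `gate_count` gates all succeed,
    assuming independent errors and no error correction or mitigation."""
    return fidelity ** gate_count

def gates_until(fidelity, min_success):
    """Largest gate count that keeps the estimate at or above `min_success`."""
    return math.floor(math.log(min_success) / math.log(fidelity))

TWO_QUBIT_FIDELITY = 0.9988   # entangling-gate figure quoted above
print(circuit_success(TWO_QUBIT_FIDELITY, 100))
print(gates_until(TWO_QUBIT_FIDELITY, 0.5))   # 577
```

Under these assumptions, the estimate drops below an even chance after a few hundred entangling gates. That is the gap error correction is meant to close.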

On the Random Circuit Sampling benchmark, Willow finished a task that Google estimates would take classical supercomputers longer than the age of the universe. That is quantum supremacy in concrete form. Not a one-time claim. A growing gap, shown on the same chip that demonstrated error correction. The benchmark itself has no direct commercial use. It measures raw capability, not usefulness.

IBM Heron

IBM’s Heron processor has 156 qubits. It ran 5,000 two-qubit operations accurately. That depth is what real algorithms need. Not benchmarks built to look good.

IBM combined Heron with thousands of classical supercomputer nodes. Together, they simulated the electronic structure of iron-sulfur clusters. They also simulated the triple-bond breaking of molecular nitrogen. Classical machines cannot do this accurately alone. Quantum handles the quantum-scale problem. Classical handles the rest. That split is the working model for the near term.
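The quantum-classical split can be sketched as a loop. A classical optimiser proposes a parameter, a quantum step evaluates it, and the result feeds the next proposal. The example below is a generic variational loop, not IBM's method for the chemistry work. The quantum step is replaced by a formula so the code runs anywhere, and all function names are assumptions.

```python
import math

def quantum_expectation(theta):
    """Stand-in for the quantum step: the expectation value <Z> after
    rotating |0> by angle theta about the Y axis, which equals cos(theta).
    On real hardware this would be estimated from repeated measurements."""
    return math.cos(theta)

def parameter_shift_gradient(theta):
    """Gradient from two extra circuit evaluations (parameter-shift rule)."""
    shift = math.pi / 2
    return (quantum_expectation(theta + shift)
            - quantum_expectation(theta - shift)) / 2

def minimize(theta=0.5, learning_rate=0.4, steps=100):
    """Classical step: plain gradient descent on the measured value."""
    for _ in range(steps):
        theta -= learning_rate * parameter_shift_gradient(theta)
    return theta, quantum_expectation(theta)

theta, energy = minimize()
print(theta, energy)
```

The loop settles near theta equal to pi, where the value reaches its minimum of -1. Only `quantum_expectation` would run on a quantum processor. Everything else stays classical.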

Microsoft and Quantinuum

Microsoft and Quantinuum built four logical qubits from 30 physical qubits. Error rates came in 800 times lower than baseline. The system ran real-time error detection during computation. It also produced entanglement between logical qubits. Both are required for any fault-tolerant machine to run real algorithms.

Three different hardware teams reached the same milestone. Error-corrected logical qubits work across multiple architectures. That is the result that matters.

The Hardware Landscape: Competing Approaches and Their Tradeoffs

The latest quantum computing discoveries come from several serious teams, not just two.

Superconducting qubits (Google, IBM) run fast. They need cryogenic systems that cool processors to 15 millikelvin. That is colder than deep space. The infrastructure is costly and power-heavy. It does not fit standard data centre economics today.

Trapped-ion systems (IonQ, Quantinuum) hold ions using electromagnetic fields. Laser pulses act as gates. Fidelity runs higher than superconducting systems. Gate speed runs lower. IonQ reached AQ 35. This benchmark measures practical circuit quality, not raw qubit count.

Neutral-atom systems (QuEra, PASQAL) trap atoms with laser arrays. A team from Harvard, MIT, and QuEra built a system controlling 48 logical qubits. That is one of the highest counts on any platform. These systems allow flexible qubit connections. That suits certain algorithms well.

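Photonic systems (PsiQuantum, Xanadu) use photons as qubits. Much of the hardware runs at room temperature. Cooling needs are far lighter than for superconducting chips, although some designs still chill their photon detectors. The tradeoff is a narrower problem range at the current stage.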

Topological qubits (Microsoft) are the most theoretically promising for built-in error resistance. Not yet at commercial scale.

Different platforms will likely lead in different application types. No single approach has won. The diversity across quantum computing technology is a structural advantage for the field.

Quantum Computing Real-World Applications: Where It Is Already Producing Results

Drug discovery and molecular simulation are the strongest near-term uses. Quantum computers can model molecular behaviour at a level of accuracy that classical methods struggle to reach. IBM’s iron-sulfur cluster simulation shows this clearly. Electronic structure problems that decide how molecules react are now being computed on quantum hardware. Drug companies are funding quantum programmes because the chemistry problem is real.

The link between quantum computing and AI in healthcare is making this faster. Protein structure prediction and personalised medicine are both moving forward. To see how AI already handles complex clinical data, read how an agentic reasoning AI doctor works through patient information. The same hybrid pattern will define quantum-assisted medicine.

Financial optimisation is producing early results. Portfolio construction and risk modelling involve solution spaces too large for classical methods to search exhaustively. Published results from financial institutions show return improvements in specific scenarios.

Materials science needs the same simulation ability as pharma. Battery chemistry, catalyst design, and superconductor research all need quantum-scale modelling. Classical machines handle these poorly.

Quantum computing in AI is active but early. Quantum neural networks and quantum support vector machines are being tested for pattern recognition in medical imaging and fraud detection. Most are hybrid and experimental. The combination of quantum computing and agentic AI points toward autonomous systems using quantum-accelerated computation. Tasks that now take hours of classical processing could one day run far faster.

Quantum cloud services from IBM, Amazon, and Google have cut the entry barrier. Researchers and smaller businesses can now run quantum algorithms on real hardware. No ownership needed. Software and tooling are growing faster because of access.

One point matters: most quantum computing in real use today is hybrid. Pure quantum execution on complex commercial problems is still on the roadmap.

Post-Quantum Cryptography: The Security Consequences of Quantum Progress

Quantum progress creates a direct security problem. Most coverage understates it.

The threat is Shor’s algorithm. It factors large numbers far faster than any known classical method. RSA encryption protects most internet traffic and financial data. It depends on factoring being hard. A large enough fault-tolerant quantum computer breaks RSA. Elliptic curve cryptography faces the same risk.
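The link between factoring and quantum speed-up comes down to finding a period. The sketch below shows the classical arithmetic around that step on a tiny number. The brute-force `find_period` loop stands in for the part a quantum computer would accelerate. The function names are illustrative, and nothing here threatens real key sizes.

```python
from math import gcd

def find_period(a, n):
    """Smallest r > 0 with a**r % n == 1, assuming gcd(a, n) == 1.
    This brute-force loop is the step a quantum computer would replace;
    it is hopelessly slow for large n."""
    r, value = 1, a % n
    while value != 1:
        value = (value * a) % n
        r += 1
    return r

def factor_from_period(a, n):
    """Turn the period of a modulo n into two factors of n, when possible."""
    if gcd(a, n) != 1:
        return gcd(a, n), n // gcd(a, n)      # lucky guess: a shares a factor
    r = find_period(a, n)
    if r % 2 == 1:
        return None                            # odd period: retry with another a
    half = pow(a, r // 2, n)
    if half == n - 1:
        return None                            # trivial square root: retry
    return gcd(half - 1, n), gcd(half + 1, n)

print(find_period(7, 15))          # 4
print(factor_from_period(7, 15))   # (3, 5)
```

For a 2048-bit RSA modulus, the period search is the only step that is out of reach classically. Speed that up, and the rest is ordinary arithmetic.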

Harvest now, decrypt later is already happening. Adversaries collect encrypted data today. They plan to decrypt it once quantum machines are powerful enough. Classified data, financial records, and medical files gathered now could be exposed later. This is a present threat, not a future one.
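A common way to reason about this risk is Mosca's inequality. Data is exposed if the time it must stay secret, plus the time needed to migrate, exceeds the time until a capable quantum computer exists. The sketch below encodes that rule. The year values are hypothetical planning inputs, not forecasts, and the function name `at_risk` is an assumption.

```python
def at_risk(shelf_life_years, migration_years, years_to_quantum):
    """Mosca's inequality: data is exposed if secrecy lifetime plus
    migration time exceeds the time until a capable quantum computer."""
    return shelf_life_years + migration_years > years_to_quantum

# Hypothetical planning numbers, not forecasts.
print(at_risk(shelf_life_years=10, migration_years=5, years_to_quantum=12))  # True
print(at_risk(shelf_life_years=2, migration_years=3, years_to_quantum=12))   # False
```

Long-lived records such as medical files fail this test first, which is why they are the obvious targets for collection today.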

NIST’s post-quantum cryptography standards are ready to use. ML-KEM handles key establishment for general encryption. ML-DSA and SLH-DSA handle digital signatures. These came from an eight-year international process. They can be deployed now.

The US government puts the cost of migrating key federal systems at around $7.1 billion over ten years. That tells you the scale every organisation faces. Not just government.

For finance, healthcare, defence, and critical infrastructure, this is not a future task. The window to act is open now and getting shorter.

Which Companies Are Leading Quantum Computing Today?

Google has the strongest hardware credibility. The Willow result was peer-reviewed and independently verified. Among the top quantum computing companies, Google made the most significant single hardware claim.

IBM leads on commercial relevance. Heron’s performance, a public roadmap, cloud access, and real hybrid results make IBM the most grounded major programme in the field.

Microsoft produced the most significant logical qubit result with Quantinuum. Its bet on topological qubits carries the highest risk and the highest potential reward.

IonQ and Quantinuum show trapped-ion systems are fully competitive in terms of fidelity. These are serious platforms.

D-Wave sits in its own category. Quantum annealing, not gate-based computing. Commercial deployments in logistics and materials optimisation are already running.

QuEra and PASQAL lead the neutral-atom space. Their logical qubit results rank among the best on any platform.

Quantum computing innovations are advancing across all hardware types at the same time. No single company is pulling away. That broad progress signals real maturation.

What Quantum Computing Still Cannot Do

Fault-tolerant quantum computing at a commercial scale does not exist. Logical qubit counts range from four to 48 across the best demonstrations. Most useful algorithms need thousands. IBM targets fault-tolerant systems at scale by the early 2030s.

The software gap is real. Hardware is moving faster than algorithms and tools. Writing efficient quantum circuits needs expertise in both quantum physics and software engineering. Few people have both.

Cryogenic infrastructure is a hard constraint. Superconducting processors need temperatures colder than outer space. Running that at a data-centre scale is expensive and not yet viable.

Benchmarking is inconsistent. Qubit count, gate fidelity, quantum volume, and circuit depth all measure different things. One strong metric says nothing about the others. Read every claim carefully.

These limits do not cancel the recent advancements in quantum computing. They give those advancements an honest context.

The Impact of Quantum Computing on Technology: What the Roadmaps Show

Three things will shape the impact of quantum computing on technology more than any single chip. Error correction scaling. Algorithm development. Cryptographic migration.

Google’s next target is a long-lived logical qubit. It aims for a large fault-tolerant machine by the end of the decade. IBM has a public roadmap through the early 2030s, committing to fault-tolerant systems at scale. Microsoft is pursuing topological qubits. The goal is built-in error resistance that removes the overhead surface codes require.

Hybrid quantum-classical systems are the dominant model for the near future. Quantum and classical processors split problems by what each handles best. IBM’s chemistry work with classical supercomputers is the clearest example today. Businesses tracking technology direction should read AI development trends alongside quantum progress. Hybrid systems, specialised hardware, and problem-specific computation are the direction of the next decade. Quantum fits directly into that path.

The latest breakthroughs in quantum computing moved the timeline from speculative to credible. The problems ahead are hard but not scientifically unsolvable. Organisations that act now have an advantage over those still waiting.

Rashida Hanif is a Senior SEO Content Marketing Manager at Trendusai.com, specializing in data-driven content strategy and SEO. She helps brands improve online visibility through keyword research, content planning, and AI-powered marketing insights.