Meta description: Discover how agentic reasoning AI doctors are reshaping diagnosis, clinical decisions, and patient care in 2025 and beyond.

A doctor walks in. Listens. Asks one follow-up. Checks the chart. Thinks through three likely causes. Orders the right test. And when results come back differently than expected, adjusts.

That whole loop has needed a human. Until now.

The agentic reasoning AI doctor is not a symptom checker. Most people have already clicked away from those. This is different. These systems don’t pull pre-written answers. They think through a problem. They form a guess. They weigh what fits. And they change their mind when new data shows up.

Healthcare is short on doctors. Drowning in paperwork. Demand keeps growing. Supply can’t keep up. For that kind of system, this isn’t just a software upgrade. It’s a relief at a structural level.

This piece covers how agentic reasoning works in real medical settings, where it’s already running, and what’s really hard about it. If you build health tech, run medical teams, or are deciding whether to bring AI into care delivery, keep reading.

Why Old Medical AI Kept Missing the Point

Hospitals have used AI for years. Imaging tools. EHR dashboards. Decision-support alerts. Good tools, mostly. But they all had the same ceiling.

Legacy medical AI works in one direction. One input goes in, one answer comes out. Done. It doesn’t follow up. It doesn’t notice when the patient’s chart changes the meaning of the symptom. It doesn’t connect the dots between a drug suggestion and the six other medications a patient is already taking.

That’s not a minor flaw. That’s the flaw.

Real medicine is almost never one question with one answer. A 35-year-old runner and a 72-year-old with congestive heart failure can report the same chest tightness. A good doctor knows those are two fully different conversations. Old AI doesn’t. It returns the same flag for both.

The numbers behind all this are grim. In 2024, 43.2% of US doctors reported at least one burnout symptom. Most of them weren’t burned out from seeing patients. They were burned out from charting. Nearly two-thirds said admin tasks and charting were the top drivers. Doctors spend about 90 minutes after their shift ends catching up on EHR entries. They call it “pajama time.” It’s real. It’s common. And it’s documented.

Meanwhile, the supply side keeps shrinking. The Association of American Medical Colleges projects a shortage of up to 86,000 doctors in the US by 2036. Summarizing medical notes faster doesn’t fix that. Flagging billing codes faster doesn’t fix that.

What fixes it is AI that can actually reason — not pattern-match, not search a database. Reason.

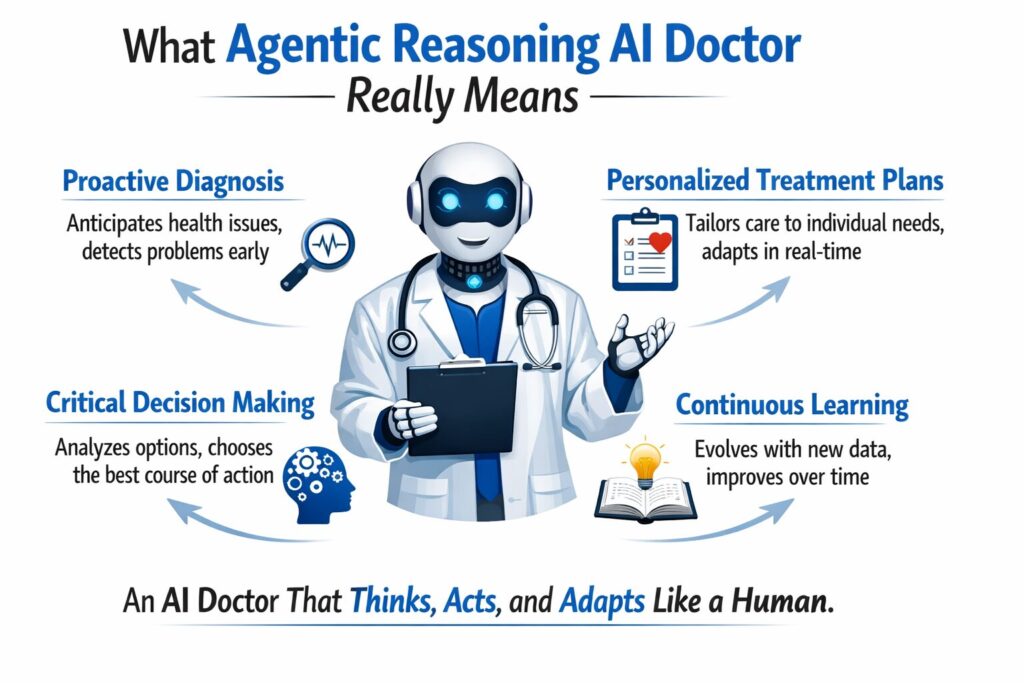

What Agentic Reasoning AI Doctor Really Means

People throw the term around loosely. So here’s what it actually means, plainly.

Agentic means the system has a goal and pursues it without needing to be prompted at every step. It figures out what’s needed next and does it, within limits that humans set.

Reasoning means it doesn’t just retrieve. It weighs options. It considers what fits and what doesn’t. It arrives at an answer through a real thought process.

Stack those two things together. You get an agentic reasoning AI doctor: a system that runs a full patient visit from start to finish. It gives a suggestion. And when things change, it updates that suggestion.

Here’s what that looks like in practice, step by step:

It gathers and builds context

Symptoms, history, labs, imaging notes, wearable readings, past visits. Not just stored — used to build a full picture of this patient, right now.

It runs many hypotheses at once

Instead of picking one answer and stopping, it holds several likely diagnoses open at once. Each one gets weighted against the full context — not just the chief complaint.

It picks the most defensible answer and says why

Not “probably pneumonia.” More like: “Pneumonia is most likely based on these three factors. A chest X-ray would confirm it or point toward these two alternatives instead.”

It updates when things change

New lab results come in. Patient clarifies a detail. A vital sign shifts. The system revises; it doesn’t stay locked to what it concluded 20 minutes ago.

It explains itself in plain language

Patients get told what’s happening and why, in words that make sense. That matters for follow-through.

This is not a chatbot. A chatbot matches keywords to stored answers. This is a system that holds context, tracks changes, and can change its mind. That’s what real clinical reasoning needs.
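The loop described above (hold several hypotheses, weigh each against the evidence, revise when something new arrives, say why) can be sketched in a few lines of Python. This is a toy illustration using simple Bayesian re-weighting, not how any named product works. The `Differential` class, the diagnoses, and every number in it are assumptions made up for the example, not clinical values.

```python
from dataclasses import dataclass, field


@dataclass
class Differential:
    """Several candidate diagnoses held open at once, each with a weight."""
    weights: dict                      # diagnosis name -> current probability
    evidence: list = field(default_factory=list)

    def update(self, finding, likelihoods):
        """Re-weight every hypothesis by how well it explains a new finding.

        likelihoods maps diagnosis -> P(finding | diagnosis). Diagnoses that
        are not listed get a neutral 0.5 (an assumption of this sketch).
        """
        scaled = {dx: w * likelihoods.get(dx, 0.5) for dx, w in self.weights.items()}
        total = sum(scaled.values())
        if total == 0:
            raise ValueError("finding rules out every hypothesis on the list")
        self.weights = {dx: w / total for dx, w in scaled.items()}
        self.evidence.append(finding)

    def leading(self):
        """Return (diagnosis, probability) for the current front-runner."""
        return max(self.weights.items(), key=lambda item: item[1])

    def explain(self):
        """Say what ranks first, on what evidence, and what is still open."""
        dx, p = self.leading()
        rest = sorted((d for d in self.weights if d != dx),
                      key=self.weights.get, reverse=True)
        return (f"{dx} is most likely ({p:.0%}) given: {', '.join(self.evidence)}. "
                f"Still open: {', '.join(rest)}.")


# Illustrative numbers only, not clinical values.
case = Differential({"pneumonia": 0.4, "bronchitis": 0.4, "pulmonary embolism": 0.2})
case.update("fever with productive cough",
            {"pneumonia": 0.8, "bronchitis": 0.5, "pulmonary embolism": 0.1})
print(case.explain())
case.update("clear chest X-ray",
            {"pneumonia": 0.1, "bronchitis": 0.7, "pulmonary embolism": 0.4})
print(case.explain())
```

With these made-up inputs, the first explanation leads with pneumonia and the second switches to bronchitis once the X-ray result arrives. The point is the shape of the behavior: nothing is locked in, and the explanation always lists the evidence used and the alternatives still open.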

Real Rollouts: Agentic Reasoning AI Doctor in the Field

Here’s where the proof is.

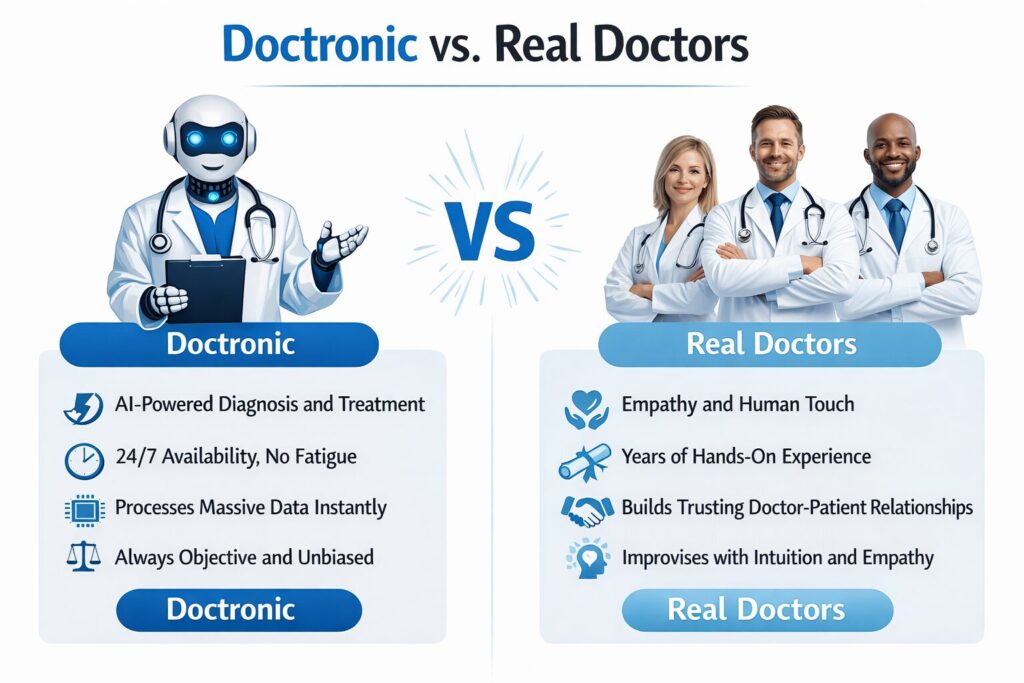

Doctronic vs. Real Doctors

Doctronic built a multi-agent clinical reasoning system. They ran it against real doctors in virtual urgent care. Not fake cases. Actual patient visits, reviewed by blinded experts.

The AI matched the top diagnosis 81% of the time. Treatment plans aligned with doctor calls in 99.2% of cases. And across the entire trial sample, it recorded zero false medical outputs. No made-up diagnoses. No invented drug suggestions.

That last number is the one most people skip past. The biggest fear with medical AI is that it will output something dangerous and state it with full confidence. This trial suggests that fear, while fair, applies far less to purpose-built medical systems. They’re built very differently from general AI tools.

AMIE: Google’s Conversational Medical Agent

Google’s AMIE (Articulate Medical Intelligence Explorer) showed something else. Not just diagnosis accuracy. Full medical conversation, start to finish.

AMIE conducts structured patient interviews. It gathers history, pulls it together, and delivers reasoning on diagnosis and disease management. In blind reviews, it performed at or above the level of primary care doctors in disease management scenarios.

What that shows: autonomous healthcare AI agents can handle the whole patient visit, not just the easy part at the end.

AI Triage in Oncology

Oncology is high stakes. Errors cost lives. Still, agentic AI has moved into treatment planning and triage sorting in pilot hospitals, connected directly with EHR systems. Pilot results showed real cuts in time-to-treatment-suggestion. Oncology teams got faster starting points. Doctors kept final control.

Across all three, the AI isn’t making the final call. It’s doing the cognitive setup so the human can make a better one, faster.

Benefits Most Teams Don’t See Coming

When health systems look at agentic AI, they usually chase the obvious wins. Faster diagnosis. Less charting. The benefits nobody plans for are often worth more.

It doesn’t take days off. In rural clinics, underserved areas, and places where the nearest specialist is three hours away, an agentic AI doctor is ready at 2 a.m. on a Sunday. That’s not a minor convenience. In some settings, it’s the difference between care and no care.

Charting gets consistent. Doctors chart differently depending on how tired they are, how busy the shift was, and how much pressure they’re under. AI holds the same standard across every visit. That consistency matters for analytics, for billing accuracy, for quality reporting.

Doctors think better. When a doctor walks in and the AI has already sorted the patient history, ranked likely causes, and flagged drug conflicts, the doctor doesn’t have to carry all that in their head. That mental space goes to the calls that actually need a doctor. McKinsey’s analysis points the same way: agentic medical AI can increase direct patient time by absorbing the surrounding work.

Problems show up earlier. These systems pull data all the time, not just at appointment times. A decline pattern shows up in the data stream before the patient calls to say something feels wrong. That’s the foundation of real preventive medicine.

Specialist thinking in primary care settings. A rural GP can get oncology or cardiology-level thinking before they refer a patient. The AI surfaces what a specialist would think about. That changes what gets caught and how fast.

What’s Hard — and What’s Exaggerated

The challenges are real. Some are more real than others.

Regulation hasn’t caught up

The FDA’s frameworks for AI-enabled medical devices weren’t designed for systems that adapt over time. Approval pathways remain unclear in some regions, and regulatory timelines move more slowly than the technology. This is a real friction point, not a theoretical one.

Transparent reasoning matters to doctors

A doctor can’t responsibly act on “the AI said so.” They need to see the thinking — why this diagnosis ranked first, what evidence it’s based on, and what it’s ruling out. Black-box outputs face real resistance from doctors trained to interrogate their own thinking. The good systems surface thinking step by step. The weak ones don’t.

Biased data creates biased AI

Most medical datasets skew toward certain demographics. An AI trained on that data will give better answers for some patients than others. That’s a data problem. It has to be fixed before rollout, not after something goes wrong.

Liability is unsettled

If an AI system contributes to a medical call that harms a patient, who’s accountable? That’s still legally unclear in most places. Until frameworks exist, institutions are cautious about fully autonomous rollout in high-acuity settings. Reasonably so.

What’s overstated

The idea that these systems will routinely make up dangerous medical advice. The Doctronic trial found zero false medical outputs across a rigorous real-world sample. That doesn’t mean false outputs are impossible. It means well-designed clinical reasoning systems work very differently from general-purpose language models. The comparison people make to scare themselves is usually the wrong one.

What the Data Actually Says About Adoption

McKinsey surveyed 150 US healthcare leaders in Q4 2024. Eighty-five percent were already exploring or actively rolling out generative AI. That’s not a fringe trend. That’s most healthcare leaders already moving.

Between 2023 and 2024, job postings for agentic AI roles grew 985%. Companies raised $1.1 billion in equity investment to build these systems. That capital doesn’t flow toward speculation. It flows toward rollout confidence.

McKinsey’s analysts point to one clear near-term area: tasks with right-and-wrong answers. Prior auth. Triage. Medication checks. Revenue cycle. These have checkable outputs. That’s where AI works with the highest accuracy and lowest risk.

The question has shifted. Most health leaders aren’t asking, “Should we use agentic AI?” They’re asking, “How do we do this without getting it wrong?”

What the Best Teams Do That Others Don’t

The health systems actually seeing results share some habits worth knowing.

They start narrow

Pre-visit history summaries. Post-visit charting. Medication checks. They don’t start by giving AI full autonomous control; they pick specific, bounded tasks, prove results and then expand.

They insist on transparent reasoning from the start

This is not about meeting a compliance requirement. It is about earning physician trust, which determines real-world adoption. If clinicians cannot understand how the system reaches its conclusions, they will not rely on it. And without their confidence and use, even the most accurate model cannot succeed in practice.

They keep humans in the loop on hard cases

The most useful rollouts use AI to prep the medical picture. Then they route complex or high-risk calls to a doctor. Accountability stays clear, and accuracy stays high.
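A routing rule like that can be very small. The sketch below is hypothetical: the `CaseSummary` fields, the queue names, and the 0.85 confidence cutoff are all assumptions chosen for illustration, and a real deployment would set them with clinical leadership.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class CaseSummary:
    case_id: str
    top_confidence: float  # AI's confidence in its leading diagnosis, 0 to 1
    high_acuity: bool      # triage flagged the case as potentially serious


def route(case, min_confidence=0.85):
    """Pick a review queue. A doctor is involved on both paths.

    High-acuity or low-confidence cases get a full physician review.
    Everything else still goes to a doctor, but as a routine sign-off.
    """
    if case.high_acuity or case.top_confidence < min_confidence:
        return "physician_review"
    return "routine_signoff"


# Illustrative cases only.
for case in (
    CaseSummary("A-1", top_confidence=0.95, high_acuity=False),
    CaseSummary("A-2", top_confidence=0.95, high_acuity=True),
    CaseSummary("A-3", top_confidence=0.60, high_acuity=False),
):
    print(case.case_id, route(case))
```

The design choice worth noticing is that acuity overrides confidence. A confident AI answer on a high-risk case still lands in front of a doctor for a full review.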

They audit all the time

Medical AI that learns from new data can drift. The best teams build outcome checks into the rollout from day one. Not as an annual review. As an ongoing habit.
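One simple way to make auditing a habit is a rolling agreement check: track how often the AI’s top suggestion matches the clinician’s final call, and raise a flag when the recent rate slips below an agreed baseline. The sketch below assumes that setup. The class name, window size, and tolerance are hypothetical, and agreement rate is only one of several signals a real audit would watch.

```python
from collections import deque


class AgreementMonitor:
    """Rolling check of how often the AI matches the clinician's final call."""

    def __init__(self, baseline, tolerance=0.05, window=200):
        self.baseline = baseline      # agreement rate measured at rollout
        self.tolerance = tolerance    # how far below baseline is acceptable
        self.outcomes = deque(maxlen=window)  # oldest results fall off

    def record(self, ai_matched_clinician):
        self.outcomes.append(bool(ai_matched_clinician))

    def rate(self):
        """Agreement rate over the current window, or None if empty."""
        if not self.outcomes:
            return None
        return sum(self.outcomes) / len(self.outcomes)

    def drifting(self):
        """True once a full window sits below baseline minus tolerance."""
        if len(self.outcomes) < self.outcomes.maxlen:
            return False  # not enough data yet to judge
        return self.rate() < self.baseline - self.tolerance


# Illustrative run with a tiny window.
monitor = AgreementMonitor(baseline=0.90, tolerance=0.05, window=10)
for _ in range(10):
    monitor.record(True)
print(monitor.rate(), monitor.drifting())
for _ in range(3):
    monitor.record(False)
print(monitor.rate(), monitor.drifting())
```

Because the check runs on every recorded visit, a slide in agreement shows up within one window instead of at the next scheduled review.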

They clean their data before they pick their model

The organizations with the most reliable AI results fixed their patient data first. The quality of the data the AI reasons over determines what it outputs. Poor source data produces poor clinical reasoning, regardless of how good the model is.

Where This Goes From Here

What exists today is early relative to what’s coming.

Multimodal thinking

Current systems handle text, labs, and structured medical data. Next-generation systems will reason across imaging, genomics, wearables, and audio together. That gives AI a broader picture than any single doctor can hold at once.

Proactive tracking

Right now, AI waits for a patient to arrive with symptoms. That changes. Agents watching data streams will flag a decline before a threshold is crossed. That’s real preventive medicine.
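Flagging a decline before a threshold is crossed usually means watching the trend, not just the latest value. A minimal sketch, assuming equally spaced readings and a straight-line trend: fit a least-squares line to recent readings and check where it lands a few steps ahead. The function name, the readings, and the threshold below are illustrative assumptions, not clinical guidance.

```python
def projected_below(readings, threshold, horizon):
    """Return True if the recent trend is heading below the threshold.

    readings: equally spaced values, oldest first.
    horizon: how many steps past the last reading to project.
    Fits an ordinary least-squares line and extends it forward.
    """
    n = len(readings)
    if n < 3:
        return False  # too few points to call it a trend
    mean_x = (n - 1) / 2
    mean_y = sum(readings) / n
    sxx = sum((x - mean_x) ** 2 for x in range(n))
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in enumerate(readings))
    slope = sxy / sxx
    projected = mean_y + slope * ((n - 1 + horizon) - mean_x)
    return projected < threshold


# Illustrative oxygen-saturation style readings; every value is still above 92.
declining = [98, 97, 96, 95, 94]
stable = [97, 98, 97, 98, 97]
print(projected_below(declining, threshold=92, horizon=3))
print(projected_below(stable, threshold=92, horizon=3))
```

In the declining series every individual reading is still above the threshold, so a plain threshold alert would stay silent, while the trend check flags it. A production system would need something more robust than a straight line, but the idea is the same.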

Access in low-resource settings

McKinsey noted that agentic systems can extend specialist-level thinking to places that currently have none. The technical capability is already close. The deployment infrastructure is what’s lagging.

Smarter EHR connection

The next wave of electronic health records AI won’t just log visits. It will pull up patient history on its own. It will flag care gaps in real time. It will update care plans as new evidence comes in.

AI and a doctor working together

AI isn’t a replacement for a doctor. The two work together for better results. AI takes on the work that doesn’t need human judgment, so the human can focus on what does.

FAQ

What is an agentic reasoning AI doctor?

It’s a purpose-built AI system that handles multi-step clinical reasoning — gathering patient data, building likely diagnoses, recommending next steps, and revising answers as new data comes in. It doesn’t need a human to prompt every move.

How is it different from traditional medical AI?

Traditional medical AI takes one input and gives one output. Agentic systems hold context across a full patient visit, pursue a goal across many steps, and revise their thinking as things change. One is a lookup. The other thinks.

Is it safe for medical use right now?

Purpose-built clinical reasoning systems have shown near-zero false output rates in real-world trials. Safety depends on system design, which tasks the system is deployed for, and how much doctor oversight is built in. No safe rollout cuts doctors out of high-risk calls.

What are the real barriers to adoption?

Regulatory frameworks that haven’t caught up, transparency gaps that erode doctor trust, data bias baked into training sets, and unresolved liability questions. All solvable, but none of them solve themselves.

Can it handle complex cases involving many body systems?

Current rollouts work best in defined domains, such as urgent care, primary care triage, and oncology support. Multi-system complexity benefits from AI assistance but still needs skilled doctor judgment for final calls.

How does it affect patients?

Faster responses, fewer repeated questions across care touchpoints, and more consistent messaging. Patient satisfaction in AI-assisted visits runs close to human-only care — especially when AI is framed as a support layer, not a replacement.

What should a health system evaluate before rolling this out?

Data infrastructure quality, regulatory requirements in your region, whether the system surfaces its reasoning, how liability will be allocated, and what doctor oversight looks like for high-risk scenarios.

How does machine learning in medicine improve over time?

ML models improve through exposure to new medical data, provided that the data is clean, broad, and not systematically biased. Well-governed systems update their thinking as medical evidence evolves. Static rule-based systems can’t do that.


Rashida Hanif is a Senior SEO Content Marketing Manager at Trendusai.com, specializing in data-driven content strategy and SEO. She helps brands improve online visibility through keyword research, content planning, and AI-powered marketing insights.