The Problem Has Changed: Most Security Teams Haven’t

Agentic AI, combined with Pindrop and Anonybit, is changing how enterprises defend against modern voice fraud. Voice scams once depended on human callers and simple scripts, and most contact-center defenses were built around those older threats. That is no longer the case. AI-generated voices now imitate customers with alarming accuracy, and traditional authentication methods struggle to keep pace.

Deepfake fraud attempts in U.S. contact centers increased by more than 1,300% in 2024, rising from about one attempt a month to nearly seven each day. Fraud incidents now strike a contact center roughly every 46 seconds. Synthetic voice attacks have grown sharply across industries, including a 149% rise in banking, 475% in insurance, and over 100% in retail. According to the 2025 Voice Intelligence & Security Report by Pindrop, projected fraud losses could reach $44.5 billion in 2025.

Human detection offers little protection. Studies show people identify audio deepfakes correctly only about 35% of the time. A fake voice does not need to sound flawless. It only needs to stay convincing for half a minute—long enough to reset a password, authorize a transfer, or persuade an agent to unlock an account.

Many security teams still rely on systems designed for an earlier era of fraud, which leaves their contact centers exposed to automated, large-scale attacks. In this guide, we explain how Agentic AI, combined with Pindrop and Anonybit technologies, protects sensitive data and prevents voice-based fraud in modern contact centers.

What Agentic AI Actually Means for Fraud

Most explanations of agentic AI stay general. For fraud teams, what matters is how it specifically changes the attack surface in a way that invalidates most existing defenses.

Traditional voice fraud required a human in the loop. Someone had to make the call, follow the script, and adapt when challenged. The attacker’s time, attention, and skill set the hard ceiling on how many attempts could run at once. Agentic AI removes that ceiling entirely. These systems initiate calls on their own, respond to questions in real time, shift their emotional tone mid-conversation, and carry out multi-step tasks like account changes and IVR navigation without any human oversight. They don’t tire. They don’t get flustered. They don’t have a volume limit.

Here’s what that looks like in practice. A fraudster deploys an autonomous AI agent against a bank’s IVR system at 2 a.m. The agent navigates the automated menu, answers knowledge-based questions using stolen PII, and reaches a live representative. When the representative pushes back, the AI adjusts: polite, calm, persistent. The account is accessed, and no human attacker was ever on the line.

This pattern isn’t hypothetical. Pindrop’s data shows AI-driven fraud attempts surged 1,210% in 2025, while traditional fraud grew just 195% over the same period. Organizations relying on knowledge-based authentication (KBA) questions and one-time passwords are guarding a locked front door while the window beside it is wide open.

The right question has changed. It’s no longer “Is this voice real?” It’s “Does this entire interaction behave the way a real human interaction would?” That framing is the foundation of the combined Agentic AI, Pindrop, and Anonybit defense architecture, and it explains why the stack closes gaps that older systems can’t.

Pindrop: Detecting What the Human Ear Misses

Pindrop sits at the detection layer of the stack. Its job is to determine whether a voice is biologically real before any authentication step runs, and that job is considerably harder than it was three years ago.

Modern synthetic voices aren’t low-quality recordings. They’re generated by models trained on thousands of hours of real speech, and they sound natural to the human ear. What they can’t replicate is the physics of human voice production. Real speech is shaped by lungs, vocal cords, and resonant tissue; synthesized speech is rendered by a processor. The differences are measurable: unnatural millisecond-level pauses, missing background ambience, high-frequency artifacts, and inconsistencies in call metadata. None of them is audible to a person on the line.
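To make “measurable” concrete, here is a toy sketch of one such signal: locating pauses by thresholding short-frame energy and reporting their lengths in milliseconds. The 10 ms frame size, the energy threshold, and the constant-amplitude test signal are illustrative assumptions. This is not Pindrop’s detector, and no single feature like this can classify a voice as synthetic on its own.

```python
"""Toy illustration: measure pause lengths (ms) from frame energy.

Not Pindrop's method. Frame size, threshold, and test signal are assumptions.
"""
from typing import List


def frame_energies(samples: List[float], frame_len: int) -> List[float]:
    """Mean squared amplitude of each complete, non-overlapping frame."""
    return [
        sum(x * x for x in samples[start:start + frame_len]) / frame_len
        for start in range(0, len(samples) - frame_len + 1, frame_len)
    ]


def pause_durations_ms(samples: List[float], sample_rate: int,
                       frame_ms: int = 10, threshold: float = 1e-4) -> List[int]:
    """Length in ms of each run of consecutive frames below the energy threshold."""
    frame_len = sample_rate * frame_ms // 1000
    pauses: List[int] = []
    run = 0
    for energy in frame_energies(samples, frame_len):
        if energy < threshold:
            run += 1                       # still inside a quiet stretch
        elif run:
            pauses.append(run * frame_ms)  # quiet stretch just ended
            run = 0
    if run:                                # signal ended while quiet
        pauses.append(run * frame_ms)
    return pauses


# Synthetic 8 kHz test signal: constant "voiced" segments separated by silence.
RATE = 8000


def tone(ms: int) -> List[float]:
    return [0.5] * (RATE * ms // 1000)


def silence(ms: int) -> List[float]:
    return [0.0] * (RATE * ms // 1000)


signal = tone(100) + silence(40) + tone(100) + silence(120) + tone(50)
print(pause_durations_ms(signal, RATE))  # [40, 120]
```

A detector would compare distributions of measurements like these, alongside many other signals, against what live speech produces; the sketch only shows that pause timing can be measured at millisecond resolution.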

Pindrop’s platform performs real-time voice anomaly detection across all of these signals simultaneously. It doesn’t wait for a full conversation. Using just two seconds of audio, its Pulse liveness detection determines whether a caller is physically present with up to 99.2% accuracy when paired with voice authentication. In independent testing by NPR, Pindrop outperformed every competitor by 40 percentage points in synthetic audio detection. The platform is trained on more than 1.5 billion real-world interactions annually and backed by over 300 patents.

The proof is in the deployments. HealthEquity, one of the largest HSA administrators in the country, cut voice fraud by more than 90% after deploying Pindrop, with no friction added for legitimate callers. In a separate engagement, a major U.S. health payer used Pindrop to detect, in real time, a coordinated attack targeting 1,200 accounts. Attackers used AI-generated voices to access and modify patient benefits. Pindrop flagged every synthetic-voice call as it came in, preventing up to $18 million in potential fraud exposure. Knowledge-based authentication would not have caught a single call. Seven of the top 10 U.S. banks currently run Pindrop across their contact centers.

For teams evaluating AI-based voice fraud detection for banking or call centers, the case isn’t built on projections. It’s built on outcomes.

Anonybit: The Problem with Storing What Can’t Be Changed

Pindrop catches the attack. Anonybit protects the identity infrastructure that the attack is targeting. These address different failure modes, and both matter.

Most organizations that deploy voice biometrics store the resulting templates in a central database. The logic is straightforward: capture a voiceprint at enrollment, store it securely, and compare it on future calls. The vulnerability is equally straightforward: that database is a high-value target, and a single breach doesn’t expose one account — it exposes every enrolled customer, permanently. A password can be reset. A voiceprint cannot.

Anonybit resolves this through a fundamentally different architectural approach. Privacy-preserving processing begins the moment a biometric is captured. Instead of storing a complete template, Anonybit converts the data into anonymized fragments, called anonybits, and distributes them across a multi-party cloud environment. No single server holds a complete record, and no single party can access or reconstruct the original biometric, even while running a match.
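The article doesn’t say how anonybits are produced, so the sketch below uses a textbook stand-in, additive secret sharing over a prime field, to illustrate the property described above: each node stores a share that looks random on its own, and the original values come back only when every share is combined. The modulus, the three-node setup, and the integer-quantized template are assumptions; this is not Anonybit’s implementation.

```python
"""Toy additive secret sharing of a quantized biometric template.

A generic stand-in for "fragmenting" a template; not Anonybit's scheme.
"""
import secrets
from typing import List

PRIME = 2**61 - 1  # field modulus (assumption for this sketch)


def split(template: List[int], n_nodes: int) -> List[List[int]]:
    """Return n_nodes shares whose element-wise sum mod PRIME equals template."""
    if n_nodes < 2:
        raise ValueError("need at least two nodes")
    # n_nodes - 1 shares are uniformly random...
    shares = [[secrets.randbelow(PRIME) for _ in template]
              for _ in range(n_nodes - 1)]
    # ...and the last share is chosen so that all shares sum to the template.
    last = [(value - sum(column)) % PRIME
            for value, column in zip(template, zip(*shares))]
    return shares + [last]


def reconstruct(shares: List[List[int]]) -> List[int]:
    """Element-wise sum of all shares mod PRIME."""
    return [sum(column) % PRIME for column in zip(*shares)]


template = [12, 7, 250, 3]           # hypothetical quantized voice features
shares = split(template, n_nodes=3)  # one share per storage node
print(reconstruct(shares) == template)  # True
```

In this scheme, any set of fewer than all the shares is uniformly random and reveals nothing about the template, which is the property that removes the single high-value database.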

Matching itself is distributed across those fragments using Multi-Party Computation (MPC), a cryptographic method that lets several parties jointly compute a result over their combined data without revealing their individual inputs to one another. Zero-Knowledge Proofs (ZKPs) add another layer: they let one party prove to another that a statement is true, such as knowing a value, without revealing the value itself. Together, these make identity verification possible with no central data repository and no single point of failure. Verification returns in 200 milliseconds with greater than 99.999% assurance.
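Continuing with the same toy model, the sketch below shows why computing over shares works for linear operations: each node computes a partial score from its own share, and only the sum of the partials, the final score, is revealed. Two simplifying assumptions are labeled in the code: the match score is a plain inner product (real matchers typically use other similarity measures), and the probe is sent to the nodes in the clear, whereas a production MPC protocol would also protect the probe and would need an interactive step to multiply two secret values. ZKPs are not shown. This illustrates the MPC idea; it is not Anonybit’s protocol.

```python
"""Toy MPC-style matching over additive shares (linear score only).

Illustrative only; not Anonybit's protocol.
"""
import secrets
from typing import List

PRIME = 2**61 - 1  # field modulus (assumption)


def split(values: List[int], n_nodes: int) -> List[List[int]]:
    """Additively share values mod PRIME across n_nodes nodes."""
    if n_nodes < 2:
        raise ValueError("need at least two nodes")
    shares = [[secrets.randbelow(PRIME) for _ in values]
              for _ in range(n_nodes - 1)]
    last = [(v - sum(col)) % PRIME for v, col in zip(values, zip(*shares))]
    return shares + [last]


def node_partial_score(share: List[int], probe: List[int]) -> int:
    """Runs on a single node: inner product of its share with the probe.

    Simplification: the probe is public to the nodes here.
    """
    return sum(s * p for s, p in zip(share, probe)) % PRIME


def combine(partials: List[int]) -> int:
    """Summing partials yields the inner product; no template is rebuilt."""
    return sum(partials) % PRIME


enrolled = [12, 7, 250, 3]  # hypothetical enrolled template
probe = [11, 8, 248, 3]     # hypothetical features from a new call
partials = [node_partial_score(s, probe) for s in split(enrolled, 3)]
score = combine(partials)
print(score)  # 62197, the same as the plaintext inner product
```

Because the inner product is linear, the sum of the per-node partials equals the score computed on the full template, yet no node ever holds that template.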

In February 2025, the U.S. Patent and Trademark Office granted Anonybit a patent for this architecture. The platform integrates natively with Microsoft Entra and PingOne DaVinci and supports face, voice, iris, and palm modalities. Support for GDPR, HIPAA, and CCPA compliance follows from the design itself, not from a retrofit applied afterward.

Competitors tend to frame biometric security as a storage upgrade. That misses the point. Anonybit’s approach is an architectural decision: not a stronger lock on the same vault, but the elimination of the vault entirely.

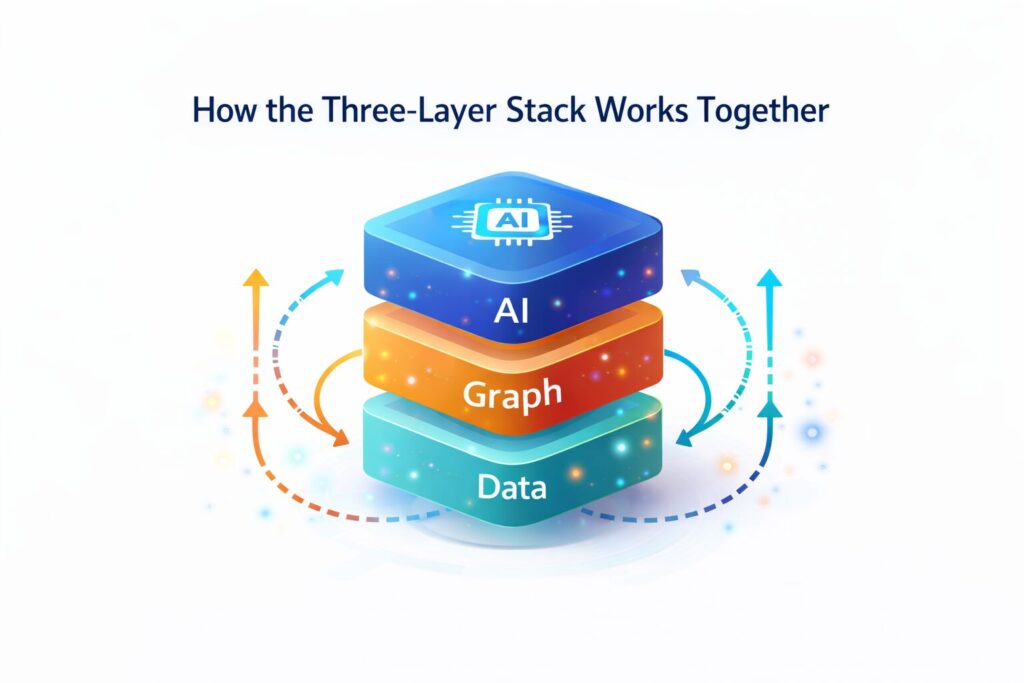

How the Three-Layer Stack Works Together

Understanding each component on its own is useful. Understanding how the three operate together in sequence is what determines whether an organization is actually covered against a live attack.

Here’s the Agentic AI, Pindrop, and Anonybit stack running against a realistic scenario. A caller contacts a bank to authorize a wire transfer, claiming to be the account holder. The caller is actually an agentic AI system using a synthetic voice cloned from the account holder’s social media audio.

Layer 1: Sense (Pindrop)

The moment the call connects, acoustic liveness analysis begins. Pindrop’s Pulse technology extracts physical voice signals within the first two seconds: resonance patterns, ambient audio, and the millisecond-level timing gaps characteristic of synthetic speech. No action is required from the caller, and no delay is introduced. In this case, the voice fails liveness detection, and a deepfake alert fires immediately.

Layer 2: Verify (Anonybit)

If the voice had passed liveness, Anonybit would pull biometric fragments from across its distributed nodes, run a distributed match, and return a score, without a complete voiceprint assembled anywhere. Because the call failed at Layer 1, this step doesn’t run for this caller.

Layer 3: Act (Agentic Decision Layer)

On a normal call, both scores (Pindrop’s liveness result and Anonybit’s identity match probability) flow into the agentic decision layer. This is not a binary gate. The system reasons against configurable risk thresholds: low-risk calls proceed automatically, medium-risk calls trigger step-up verification, and high-risk calls route to human review. In this scenario, the failed liveness check is decisive: the call is blocked, and the transaction is prevented.
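A minimal sketch of the Layer 1 to Layer 3 flow described above, assuming both scores are normalized to the range 0 to 1. The threshold values, the `RiskPolicy` fields, and the weakest-link rule that turns two scores into one risk value are hypothetical choices for illustration, not Pindrop or Anonybit APIs. Passing the identity match as a callable makes the ordering explicit: when liveness fails, Layer 2 never runs.

```python
"""Hypothetical sketch of the agentic decision layer (Layer 3)."""
from dataclasses import dataclass
from enum import Enum
from typing import Callable


class Action(Enum):
    BLOCK = "block"
    HUMAN_REVIEW = "route to human review"
    STEP_UP = "step-up verification"
    PROCEED = "proceed automatically"


@dataclass(frozen=True)
class RiskPolicy:
    liveness_floor: float = 0.5  # below this, treat the voice as synthetic
    low_risk_max: float = 0.2    # risk below this proceeds automatically
    high_risk_min: float = 0.6   # risk at or above this goes to a human


def decide(liveness: float, run_identity_match: Callable[[], float],
           policy: RiskPolicy = RiskPolicy()) -> Action:
    # Layer 1 (Sense): a failed liveness check blocks the call outright,
    # so the identity match (Layer 2) is never invoked.
    if liveness < policy.liveness_floor:
        return Action.BLOCK
    # Layer 2 (Verify): only runs for callers that passed liveness.
    match = run_identity_match()
    # Layer 3 (Act): weakest-link risk, then graded thresholds.
    risk = 1.0 - min(liveness, match)
    if risk < policy.low_risk_max:
        return Action.PROCEED
    if risk < policy.high_risk_min:
        return Action.STEP_UP
    return Action.HUMAN_REVIEW


print(decide(0.10, lambda: 0.99))  # Action.BLOCK (the scenario above)
print(decide(0.95, lambda: 0.90))  # Action.PROCEED
print(decide(0.90, lambda: 0.70))  # Action.STEP_UP
print(decide(0.90, lambda: 0.30))  # Action.HUMAN_REVIEW
```

Weakest-link scoring is one conservative choice; a weighted combination or a learned model would fit the description in the text equally well.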

The full cycle completes in under 300 milliseconds. This is real-time voice fraud prevention as a continuous loop: not a gate you pass once, not a post-event audit, but a persistent system running behind every call at scale.

Who Needs to Act Right Now

Voice fraud is an enterprise-wide problem, but the exposure is not evenly distributed. Some sectors are absorbing the highest attack volume right now and have the least time to delay.

Banking and financial services carry the most direct risk. Synthetic voice attacks in banking rose 149% in 2024, and the primary targets are high-value transaction authorizations, account detail changes, and SIM swap requests. Seven of the top 10 U.S. banks already use Pindrop. For teams evaluating voice authentication in fintech, documented ROI already exists across large-scale deployments.

Healthcare faces a parallel exposure. More than half of fraud attempts in healthcare contact centers now involve AI-generated elements. Attackers use synthetic voices, automated bots, and IVR reconnaissance to extract protected health information (PHI) or manipulate patient benefits. HSAs and FSAs are attractive targets because they hold liquid value and are routinely accessed by phone. Pindrop formally expanded into the healthcare sector in February 2026.

HR and recruiting face a newer but fast-growing version of this threat. Pindrop’s Voice Intelligence & Security Report (VISR) documented candidates using AI-generated voice and video to appear as a different person during remote hiring interviews. Pindrop Pulse for Meetings now provides real-time synthetic voice detection inside Zoom, Microsoft Teams, and Webex.

Insurance recorded the steepest sector-level increase of all, a 475% spike in synthetic voice attacks in 2024, with attackers impersonating policyholders to file claims, change beneficiaries, and access account values.

Government agencies and regulated industries face financial and legal exposure at the same time. Most of the EU AI Act’s obligations apply from August 2026, including transparency and risk-management requirements for organizations that use automated systems to interact with individuals. Building the stack now is considerably less expensive than building it under regulatory deadline pressure.

What Failure Actually Looks Like

Abstract threat data rarely moves an organization to action. Documented failure patterns do.

The $21 return problem

Pindrop’s 2025 AI Fraud Spike Report documented AI bots deployed against retail contact centers to request small refunds, each one just below the dollar threshold that requires supervisor authorization. Individually, every request looked legitimate. Across thousands of parallel calls, the losses compounded quickly and invisibly, with no single transaction flagged for review.
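The pattern is easy to reproduce in a few lines, and reproducing it shows why a per-request threshold misses it while an aggregate view does not. In this hypothetical sketch, every refund passes the per-request approval check, but counting near-limit refunds per hour across all calls exposes the burst. The $25 approval limit, the 80% “near-limit” band, the one-hour window, and the count threshold are illustrative assumptions, not figures from the report.

```python
"""Hypothetical sketch: per-request checks vs. an aggregate near-limit count."""
from collections import Counter
from typing import List, Tuple

Refund = Tuple[int, float]  # (minute the request arrived, amount in dollars)


def passes_per_request_check(amount: float, limit: float) -> bool:
    """The per-transaction rule: anything under the limit needs no supervisor."""
    return amount < limit


def flag_windows(refunds: List[Refund], limit: float, band: float = 0.8,
                 window_min: int = 60, max_near_limit: int = 20) -> List[int]:
    """Return indices of time windows with too many just-under-limit refunds."""
    counts: Counter = Counter()
    for minute, amount in refunds:
        if band * limit <= amount < limit:  # "just below" the threshold
            counts[minute // window_min] += 1
    return sorted(w for w, c in counts.items() if c > max_near_limit)


LIMIT = 25.0
# Ordinary traffic: one refund every two minutes for three hours, $5 to $19.
normal = [(m, 5.0 + m % 15) for m in range(0, 180, 2)]
# Bot burst: 300 refunds of $21 spread across the third hour.
burst = [(120 + i % 60, 21.0) for i in range(300)]
traffic = normal + burst

print(all(passes_per_request_check(a, LIMIT) for _, a in traffic))  # True
print(flag_windows(traffic, LIMIT))  # [2]
```

The window size and thresholds here are arbitrary; the point is only that the pattern is invisible one request at a time and obvious in aggregate.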

Help desk account takeover

Knowledge-based authentication was built for a world where only the real account holder knew their mother’s maiden name or their first childhood address. That data now sells on dark web marketplaces for a few dollars. Agentic AI systems combine purchased PII with cloned voices to socially engineer help desk agents who believe they’re speaking with a verified customer. SMS-based one-time passcodes add no real protection, because SIM swap attacks let fraudsters intercept those codes before they reach the actual account holder.

Biometric database breach

Any organization storing complete voiceprints in a centralized repository carries permanent exposure. A single breach means every enrolled customer’s biometric identity is gone for good, with no remediation path. Unlike a password, a compromised voiceprint cannot be reissued.

The 2027 plan

The most common organizational response to threat data of this scale is a roadmap with an 18-month timeline. Pindrop’s 2025 report projects that retail contact center fraud will reach one attempt in every 56 calls, against the stacks that are running today. Waiting is not a neutral decision.

Practical Steps to Get Started

Most organizations don’t need to deploy the full stack on day one. What they need is a clear starting point that addresses the highest-risk exposure before working outward.

Audit your riskiest call flows first

Password resets, account detail changes, SIM swap requests, and high-value transaction authorizations are the four entry points where fraud is most concentrated. Map those specific flows in detail before evaluating any technology against them.

Evaluate liveness detection and voice authentication

Liveness detection answers whether a real human is on the line. Voice authentication answers whether that person matches a stored identity. Running authentication without liveness detection leaves the stack open to synthetic voices that can pass a voiceprint match. Running liveness without authentication confirms that a human is present but not which human, so a live impostor armed with stolen PII can still get through.

Train agents with concrete behavioral markers, not general awareness

Telling agents to “watch out for deepfakes” produces no behavior change. Agents who know what synthetic voice cadence sounds like, what the escalation path is when a liveness score returns red, and how to handle a caller who resists additional verification close the human-layer gap that technology alone can’t fully cover.

Choose an integration path that doesn’t disrupt the existing workflow

Pindrop’s integration with NICE CXone processes calls in real time and pushes a simple red/yellow/green risk signal to agents before they speak. There’s no change to the customer experience, no added call time, and no new process for agents to learn. The information reaches them before the conversation starts, which is when blocking autonomous AI voice fraud is most effective.

Start with one channel, prove the ROI, then expand

A single contact center channel with 60 days of documented fraud reduction numbers makes a significantly stronger internal budget case than projected savings across the full operation.
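A budget case is stronger when the before-and-after comparison is normalized by call volume, so that a change in traffic doesn’t masquerade as fraud reduction. The sketch below assumes you have call counts and confirmed fraud losses for a baseline window and for the 60-day pilot; all figures in it are hypothetical.

```python
"""Hypothetical sketch: normalized fraud-loss reduction for a pilot channel."""
from dataclasses import dataclass


@dataclass(frozen=True)
class Period:
    calls: int           # calls handled on the pilot channel
    fraud_losses: float  # confirmed fraud losses in dollars


def losses_per_1k_calls(period: Period) -> float:
    return 1000 * period.fraud_losses / period.calls


def reduction_pct(baseline: Period, pilot: Period) -> float:
    """Percent drop in losses per 1,000 calls from baseline to pilot."""
    before = losses_per_1k_calls(baseline)
    after = losses_per_1k_calls(pilot)
    return 100 * (before - after) / before


baseline = Period(calls=120_000, fraud_losses=90_000.0)  # 60 days before
pilot = Period(calls=150_000, fraud_losses=45_000.0)     # 60-day pilot
print(losses_per_1k_calls(baseline), losses_per_1k_calls(pilot))  # 750.0 300.0
print(reduction_pct(baseline, pilot))  # 60.0
```

In this example raw losses fell by 50%, but because call volume rose, the normalized reduction is 60%; the same normalization cuts the other way when volume falls.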

The Assumption That No Longer Holds

Voice was trusted because it felt human. In 2026, that assumption is gone, and the security posture built on it needs to be rebuilt around what’s actually attacking organizations now.

The question facing security and operations teams isn’t whether to act; it’s whether their response is sophisticated enough to match what’s coming at them. Reviewing flagged calls after the fact, adding an OTP layer, and scheduling annual fraud awareness training are not answers to a system capable of initiating thousands of autonomous calls per hour without a single human involved.

The Agentic AI, Pindrop, and Anonybit stack closes the gap at three distinct layers. Pindrop reads the signal and catches synthetic audio before authentication ever runs. Anonybit protects the biometric data the attacker is trying to reach, with an architecture that has no central point to breach. The agentic decision layer handles the response, fast enough to block a transaction before it clears and accurate enough to do so without adding friction for legitimate callers.

The goal is not a perfect system. The goal is a system that makes AI-powered voice scams slow, expensive, and detectable for attackers, which is enough to push them toward softer targets. That’s what this stack delivers.

FAQs

What is the difference between Pindrop and traditional voice authentication?

Traditional voice authentication checks whether a caller’s voice matches a stored voiceprint. Pindrop adds liveness detection before authentication runs — confirming whether the voice is biologically produced by a human rather than generated by a machine. This step catches synthetic voice attacks that would otherwise pass a standard voiceprint comparison.

Does Anonybit comply with GDPR and HIPAA?

Yes. Anonybit’s distributed architecture satisfies GDPR’s data minimization and privacy-by-design principles because no complete biometric template is ever stored or reconstructed in a single location. The same structural approach supports HIPAA compliance for healthcare organizations and CCPA compliance for California-regulated entities.

How fast does the Agentic AI layer make a decision?

The full cycle completes in under 300 milliseconds. Pindrop’s liveness detection alone triggers within the first two seconds of call connection.

Can this stack detect deepfake voices in real-time video calls, not just phone calls?

Yes. Pindrop Pulse for Meetings extends real-time synthetic voice and video detection to Zoom, Microsoft Teams, and Webex, covering remote hiring interviews, executive calls, and any high-stakes video interaction where identity impersonation carries risk.