According to McKinsey, AI could add $13 trillion to global GDP by 2030. Every year, billions of dollars are invested in enterprise AI with the aim of improving efficiency, productivity, and innovation. Companies authorize pilots, hire data scientists, and stand up proofs of concept. Then most of those projects quietly stall.

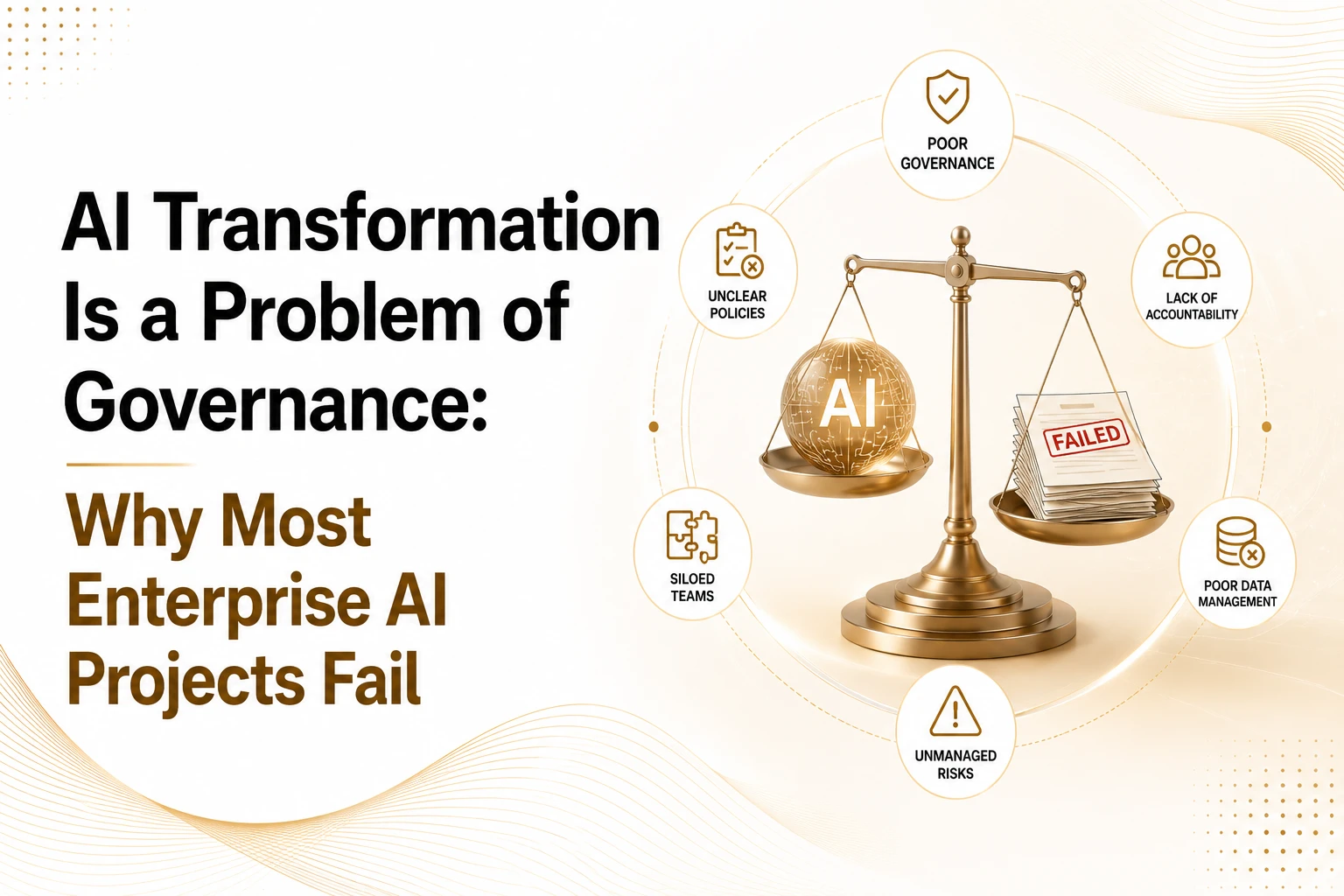

This is not a technology story. AI transformation is a problem of governance. The models work. The compute is available. What’s missing is the institutional infrastructure that determines who owns the outcome, who monitors the system, and who answers when something goes wrong.

RAND Corporation’s 2025 analysis found that 80.3% of AI projects fail to deliver their intended business value, a failure rate more than twice that of conventional IT projects. MIT Project NANDA (July 2025) reported that 95% of organizations deploying generative AI saw zero measurable impact on profit and loss. These are not marginal shortfalls. They point to a structural problem that no model upgrade will fix.

This article explains where that structure breaks down, what failure looks like in practice, and what it actually takes to govern AI at scale, starting from the premise that AI transformation is a problem of governance, not a problem of technology.

Why Better Technology Is the Wrong Response to AI Failure

The most common executive response to a stalled AI project is to blame the technology: switch vendors, upgrade the model, or hire a different team. It is almost always the wrong diagnosis.

McKinsey’s State of AI 2024 found that 72% of enterprises had AI running in production, but only 9% described their governance as mature. That gap between widespread deployment and minimal oversight is where the failures concentrate. The same research found that only 28% of CEOs take direct accountability for AI governance, and only 17% of boards formally own it.

That means AI systems that make pricing decisions, screen job applicants, flag insurance claims, and influence clinical recommendations are often running within organizations where no one has formally accepted responsibility for what they produce. When those systems generate errors, the response is improvised because no process was designed for it.

Organizations that have governed ERP rollouts, data migrations, and cloud transitions with careful change management are deploying AI models with a vendor contract and a Slack channel. The institutional discipline is there. It has simply not been applied to AI.

The distinction matters. AI transformation is a problem of governance, not capability: an organizational challenge rather than a technical one. Building the right structure requires the same sustained institutional attention that any high-stakes operational change requires.

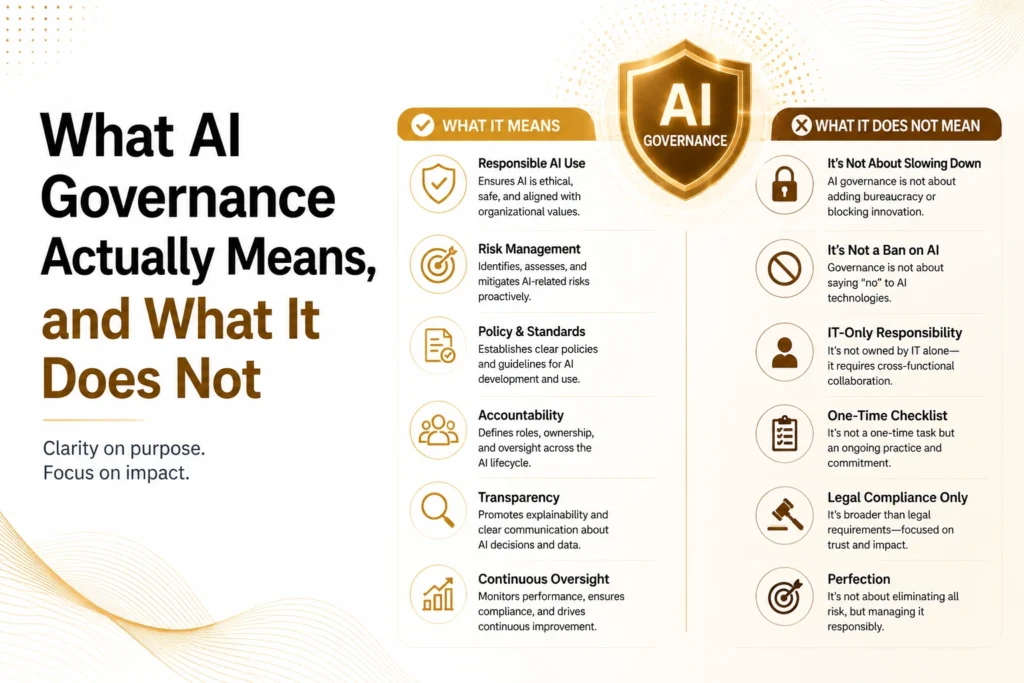

What AI Governance Actually Means, and What It Does Not

AI transformation is a problem of governance, and governance is not a policy document filed away with the legal paperwork. It is not an ethics committee that meets quarterly. It is the set of operational structures that determine how an AI system gets approved, deployed, monitored, and retired, and who is accountable at each stage.

Three things are required for governance to function.

Decision rights: Who has formal authority to approve or block an AI system, and what documentation that authority requires. Without this, deployment decisions are made by whoever has the loudest voice or the shortest deadline.

Escalation and accountability: What happens when an AI system produces a harmful or unexpected output? Who is notified, on what timeline, and what actions follow? Organizations without this structure respond to AI incidents the way they respond to any other crisis: reactively, expensively, and inconsistently.

Ongoing monitoring: How the organization knows, on a sustained basis, that its AI systems are still working as intended. That means not just technical monitoring of uptime and latency, but governance monitoring of output equity, error rates, and model drift.

The distinction between governance and compliance is worth drawing explicitly. Compliance means satisfying a regulator. Governance is the internal accountability structure that would exist even if no regulator were watching. Organizations that conflate the two tend to build governance that satisfies audits without preventing failures.

A particularly common variant is what might be called governance theatre: named AI ethics committees with no enforcement authority, responsible AI principles that no team was trained to apply, and risk assessments completed once and never revisited. The paperwork exists. The infrastructure does not. Most organizations are somewhere in this gap, not ungoverned exactly, but not governing in any meaningful operational sense either.

The Five Failure Modes Killing Enterprise AI

Across large-scale AI deployments, the governance problem shows up in recognizable patterns. These five failure modes appear repeatedly in organizations of different sizes and sectors, and they account for much of what the research identifies as AI project failure.

1. The Accountability Void

An AI system goes live. It makes decisions that affect customers, employees, or partners. Then it produces a consequential error: a discriminatory output, a flawed recommendation, or a compliance violation.

The question that follows is simple: who is accountable? The answer, in most organizations, is no one specific. The technology team approved the architecture. The business unit approved the use case. Legal reviewed the data privacy terms. Compliance signed off on the policy. Each sign-off covered a slice of the decision. No one accepted accountability for the outcome as a whole.

Distributed accountability is functionally identical to no accountability when something goes wrong. This is the accountability void, the clearest expression of why AI transformation is a problem of governance. The fix is structural: a named executive who has formally accepted responsibility for each high-impact AI system.

2. The Decision Rights Vacuum

AI systems routinely operate in domains where humans previously made decisions, such as credit approvals, hiring recommendations, diagnostic flags, and content moderation. In most organizations, no one has explicitly resolved whether the AI recommendation is binding, advisory, or something in between.

The result is that different employees resolve the question differently. One manager overrides the AI on instinct. Another treats it as binding. A third escalates to a committee that has no charter to handle it. The inconsistency creates operational risk and legal exposure simultaneously, because the organization cannot demonstrate uniform application of its own policies.

Every AI system that influences material decisions needs a documented decision authority protocol specifying exactly when the AI recommendation leads, when human override applies, and what documentation is required either way.
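
As a rough illustration, a decision authority protocol can be captured as a small record that every logged decision is checked against. The Python sketch below is a minimal example under stated assumptions. `DecisionProtocol`, `DecisionRecord`, `protocol_violations`, their fields, and the "credit-screening" system are all hypothetical; they are not a standard schema or any product's API. The record encodes the two questions the protocol has to answer: does the AI recommendation lead, or must a human decide? And what documentation does each path require?

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class DecisionProtocol:
    """Hypothetical decision authority protocol for one AI system."""
    system: str
    ai_leads: bool  # True: the AI recommendation applies unless a human overrides it
    reason_required_on_override: bool = True
    reason_required_on_acceptance: bool = False  # advisory systems: justify agreeing, too


@dataclass(frozen=True)
class DecisionRecord:
    """One logged decision (hypothetical schema)."""
    ai_recommendation: str
    final_outcome: str
    human_decided: bool
    reason: str = ""


def protocol_violations(p: DecisionProtocol, r: DecisionRecord) -> list[str]:
    """Return the ways a logged decision departs from the protocol (empty list if none)."""
    issues = []
    overridden = r.final_outcome != r.ai_recommendation
    if not p.ai_leads and not r.human_decided:
        issues.append("advisory system: a human decision is required")
    if overridden and not r.human_decided:
        issues.append("outcome departs from AI recommendation without a human decision")
    if overridden and p.reason_required_on_override and not r.reason:
        issues.append("override lacks a documented reason")
    if (not overridden and r.human_decided
            and p.reason_required_on_acceptance and not r.reason):
        issues.append("human acceptance lacks a documented reason")
    return issues


# Example: an advisory system where a manager overrode the AI without noting why.
credit = DecisionProtocol("credit-screening", ai_leads=False, reason_required_on_acceptance=True)
print(protocol_violations(credit, DecisionRecord("decline", "approve", human_decided=True)))
# prints ['override lacks a documented reason']
```

You can run the same check over a sample of logged decisions. That is one way to test whether different managers are applying the protocol uniformly.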

3. The Regulatory Surprise

An AI system has been running in production for eighteen months. A governance review, triggered by an external audit, an acquisition, or a regulatory inquiry, finds that the system qualifies as high-risk under the EU AI Act. It requires a conformity assessment, quality management documentation under Article 17, bias testing records, and human oversight mechanisms. None of these were built into the system at design time.

Retrofitting compliance into a live system is possible, but it costs roughly three to five times what proactive compliance would have cost, and it takes time the organization may not have. That is why the governance problem must be resolved before deployment, not after. August 2, 2026, the date the European Commission’s enforcement powers under the EU AI Act take effect, is not a planning date. From that date, the Commission can investigate, issue binding orders, and impose financial penalties.

Regulatory mapping belongs in the system design phase. By the time a model is in production, the architecture that would satisfy compliance obligations is either there or it is not.

4. The Monitoring Mirage

An organization has monitoring in place. Engineers watch uptime, latency, and accuracy against holdout test sets. The dashboards look healthy. But no one is tracking the distribution of AI outputs across demographic groups, the rate at which AI recommendations result in consequential errors, or how far the live data distribution has drifted from the training data.

The organization believes it is watching its AI. It is watching the wrong things. Model drift is invisible to technical monitoring. Demographic disparity in outcomes is invisible to technical monitoring. The system appears to be working until the damage is substantial enough to surface through a complaint, a lawsuit, or a press report.
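
To make "watching the right things" concrete, here is a hedged Python sketch of two governance metrics that uptime and accuracy dashboards do not show. The choice of metrics is an assumption made for illustration:

- The population stability index (PSI) is a drift measure common in credit risk modeling. It compares binned live data with the training distribution.
- A selection-rate ratio compares favorable-outcome rates across groups.

Function names and input shapes are hypothetical.

```python
import math
from collections import Counter
from typing import Iterable


def population_stability_index(expected: list[float], actual: list[float]) -> float:
    """PSI between two binned distributions, each given as bin proportions summing to 1.

    expected: bin shares in the training (reference) data
    actual:   bin shares in recent production data
    """
    if len(expected) != len(actual):
        raise ValueError("both distributions must use the same bins")
    eps = 1e-6  # floor for empty bins so the logarithm stays finite
    psi = 0.0
    for e, a in zip(expected, actual):
        e, a = max(e, eps), max(a, eps)
        psi += (a - e) * math.log(a / e)
    return psi


def selection_rate_ratio(outcomes: Iterable[tuple[str, bool]]) -> float:
    """Lowest group's favorable-outcome rate divided by the highest group's.

    outcomes: (group_label, favorable) pairs. 1.0 means identical rates.
    """
    totals = Counter()
    favorable = Counter()
    for group, ok in outcomes:
        totals[group] += 1
        favorable[group] += int(ok)
    if not totals:
        raise ValueError("no outcomes to compare")
    rates = [favorable[g] / totals[g] for g in totals]
    return 1.0 if max(rates) == 0 else min(rates) / max(rates)


drift = population_stability_index([0.25, 0.25, 0.25, 0.25], [0.10, 0.20, 0.30, 0.40])
print(round(drift, 3))  # 0.228
outcomes = [("A", True)] * 50 + [("A", False)] * 50 + [("B", True)] * 30 + [("B", False)] * 70
print(round(selection_rate_ratio(outcomes), 2))  # 0.6
```

Commonly cited rules of thumb treat a PSI above roughly 0.2 to 0.25 as a significant shift. The US "four-fifths" guideline for employee selection treats a ratio below 0.8 as a possible sign of adverse impact. Both are heuristics for triggering review, not verdicts. Appropriate thresholds depend on the system and the jurisdiction.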

Governance monitoring requires its own specification, written alongside the technical monitoring plan at deployment time. It should define which output patterns trigger review, which thresholds require human escalation, and who is responsible for acting on the signals.
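
A governance monitoring specification can be as simple as a list of rules, each naming a metric, a threshold, and the person who must act. The following Python sketch assumes metric values arrive from an existing measurement pipeline. The metric names, thresholds, and owner roles are hypothetical placeholders, not recommended values. One design choice is deliberate: a metric that is not reported at all is treated as an escalation, because silence is how the monitoring mirage hides.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class GovernanceRule:
    """One line of a hypothetical governance monitoring specification."""
    metric: str
    threshold: float
    breach_when_below: bool  # True for "higher is better" metrics such as an equity ratio
    owner: str               # named role responsible for acting on the signal


def escalations(spec: list[GovernanceRule], observed: dict[str, float]) -> list[str]:
    """Return one escalation message per breached or unreported metric."""
    messages = []
    for rule in spec:
        value = observed.get(rule.metric)
        if value is None:
            # A missing signal is escalated too: silence must not look like health.
            messages.append(f"{rule.metric}: no data reported, notify {rule.owner}")
            continue
        breached = value < rule.threshold if rule.breach_when_below else value > rule.threshold
        if breached:
            messages.append(f"{rule.metric}={value:g} breaches {rule.threshold:g}, notify {rule.owner}")
    return messages


# Placeholder thresholds and owners, for illustration only.
SPEC = [
    GovernanceRule("selection_rate_ratio", 0.8, breach_when_below=True, owner="Head of Claims Operations"),
    GovernanceRule("consequential_error_rate", 0.02, breach_when_below=False, owner="Head of Claims Operations"),
    GovernanceRule("human_override_rate", 0.15, breach_when_below=False, owner="Model Risk Lead"),
]

for msg in escalations(SPEC, {"selection_rate_ratio": 0.6, "consequential_error_rate": 0.01}):
    print(msg)
```

Each rule carries its own owner rather than pointing to a separate document. As a result, no escalation can be raised without someone named to act on it.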

5. The Orphaned System

The team that built the AI model has moved to a new project. The system is still running in production. No one has actively managed it in fourteen months. The training data is now two years old. The business context the system was designed for has changed. No one owns it.

This failure mode causes the largest downstream harm: not a visible incident at launch, but the slow, unmonitored degradation of a production system over years. Gartner’s 2025 research found that organizations with high AI maturity keep initiatives active and governed for at least three years; lower-maturity organizations rarely sustain governance past the initial deployment.

Every production AI system needs a named current owner with documented responsibility for monitoring, retraining decisions, and retirement.
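
An inventory check for orphaned systems can run on a schedule against whatever system register the organization keeps. This Python sketch is illustrative only. The `ProductionSystem` fields, the 180-day review interval, and the 730-day data-age limit are assumptions chosen to mirror the scenario above. They are not thresholds taken from any standard.

```python
from dataclasses import dataclass
from datetime import date
from typing import Optional


@dataclass(frozen=True)
class ProductionSystem:
    """One entry in a hypothetical AI system register."""
    name: str
    owner: Optional[str]       # named accountable owner; None if nobody is assigned
    last_reviewed: date        # most recent documented governance review
    training_data_as_of: date  # end date of the training data


def orphan_flags(s: ProductionSystem, today: date,
                 max_review_gap_days: int = 180,
                 max_data_age_days: int = 730) -> list[str]:
    """Return the reasons a system looks orphaned (empty list if none)."""
    flags = []
    if not s.owner:
        flags.append("no named owner")
    if (today - s.last_reviewed).days > max_review_gap_days:
        flags.append("governance review overdue")
    if (today - s.training_data_as_of).days > max_data_age_days:
        flags.append("training data past age limit; retraining decision needed")
    return flags


# Roughly the scenario above: no owner, fourteen months without review, two-year-old data.
legacy = ProductionSystem("claims-scoring-v1", None, date(2025, 4, 1), date(2024, 5, 1))
print(orphan_flags(legacy, today=date(2026, 6, 1)))
```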

Shadow AI: The Governance Signal Organizations Are Reading Wrong

Seventy-eight percent of AI users report bringing personal AI tools into the workplace without organizational approval, according to a 2025 industry survey. The instinctive response in most organizations is to treat this as a security incident: identify unauthorized tools, block access, and enforce the policy.

That response misreads the signal.

Shadow AI is a governance design failure and a direct illustration of why AI transformation is a problem of governance. When employees route around official processes to use unapproved tools, they are telling the organization something specific: the governed alternative is too slow, too restrictive, or does not exist. The governance system created the incentive to bypass it.

Every unauthorized tool a team adopts represents a real operational need that official governance failed to meet. Organizations that respond with enforcement miss the diagnostic information. The right response is structural: understand why the approved process was insufficient, then fix it so that working within governance is easier than working around it.

EU AI Act and the Regulatory Timeline That Has Changed the Calculation

The governance conversation now has a legal deadline attached to it. For organizations still treating AI transformation as a problem of governance only in theory, the EU AI Act has made it a matter of law. Keeping up with AI regulatory news is no longer background reading; it is compliance work.

| Regulatory Milestone | Date | Consequence |
|---|---|---|
| EU AI Act prohibited practices | February 2, 2025 | In effect. Fines up to €35M or 7% of global annual turnover |
| GPAI provider obligations | August 2, 2025 | In effect. Non-compliant providers face Commission investigation |
| Commission enforcement powers | August 2, 2026 | Investigations, binding orders, and fines authorized from this date |
| High-risk AI full compliance | August 2, 2027 | All high-risk systems in production must be fully compliant |
| ISO/IEC 42001 | No mandate date | Increasingly required by Fortune 500 procurement teams and insurance underwriters |
| NIST AI RMF | No mandate date | De facto baseline for US federal contractors |
| US state AI laws (9 states active, Q2 2026) | Varies | Divergent definitions of algorithmic discrimination require state-by-state mapping |

Sources: EU AI Act official text; NIST AI RMF 1.0; ISO/IEC 42001; Stanford HAI 2025 AI Index

The penalty structure for the most severe EU AI Act violations (prohibited AI practices) reaches €35 million or 7% of global annual turnover, whichever is higher. For a company with €1 billion in annual turnover, that is a potential exposure of €70 million. That figure reframes the governance investment conversation entirely.
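
The arithmetic is simple enough to sketch. The Python function below encodes only the general rule stated above: the higher of €35 million or 7% of worldwide annual turnover. It is an illustration, not legal advice. It returns a ceiling, not an expected fine. It also ignores the Act's separate treatment of SMEs and start-ups, for which the lower of the two amounts applies. The function name is hypothetical.

```python
def max_fine_prohibited_practices(worldwide_turnover_eur: int) -> float:
    """Ceiling on fines for prohibited AI practices under the general rule:
    EUR 35 million or 7% of worldwide annual turnover, whichever is higher.
    Ignores the separate SME/start-up rule and any mitigating factors."""
    fixed_cap = 35_000_000
    turnover_cap = worldwide_turnover_eur * 7 / 100  # multiply first to avoid the inexact 0.07 literal
    return max(fixed_cap, turnover_cap)


for turnover in (200_000_000, 500_000_000, 1_000_000_000):
    print(f"turnover EUR {turnover:,}: ceiling EUR {max_fine_prohibited_practices(turnover):,.0f}")
```

The two terms cross at €500 million of turnover (€35 million ÷ 0.07). Above that point, exposure scales directly with turnover, so the ceiling for a €1 billion company is €70 million.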

ISO/IEC 42001, published in December 2023, provides the most direct operational pathway to EU AI Act compliance, particularly for the Article 17 quality management obligations that apply to high-risk AI providers. The NIST AI Risk Management Framework covers the US side. It is not legally required, but it is treated as a baseline in most federal and regulated-industry procurement processes.

Organizations that have not yet classified their AI systems under the EU AI Act risk tiers are not simply behind on a compliance task. They are operating without the foundational information required to assess their own regulatory exposure.

How AI Governance Differs Across Industries

The governance problem in AI transformation is not sector-neutral, and the sector determines which governance layer fails first.

Financial services organizations typically have model risk frameworks inherited from statistical modeling, codified in guidance like the US Federal Reserve’s SR 11-7. The governance structures exist, but they were calibrated for deterministic models, not generative AI. The accountability infrastructure is present; the risk parameters do not match the new technology profile.

Healthcare organizations face a different problem. Human-in-the-loop requirements are not philosophical preferences; they are patient safety obligations. An AI diagnostic tool operating without adequate human oversight is not a governance gap in the abstract; it is a clinical risk. Governance must integrate with clinical workflow at the point of care, not sit above it in a compliance document.

Public sector organizations are particularly prone to the Orphaned System failure mode. Government AI systems frequently outlast the political mandate or funding cycle that created them. No one revisits the original objectives. No one assesses whether the system still serves them. The system continues to make decisions that affect citizens, with no one actively governing it.

For teams building or advising on enterprise AI strategy, the governance approach must be designed for the sector, not against a generic framework that assumes uniform risk profiles.

What Governance-Mature Organizations Actually Do

RAND’s research implies that fewer than 20% of AI projects deliver their intended business value. The organizations behind those successes have accepted a core operating principle: AI transformation is a problem of governance, and governance is a structural investment, not a compliance formality. The patterns that distinguish that minority are consistent across research sources.

Governance precedes deployment. In organizations that succeed, governance design is a development constraint. Risk tier classification, decision rights documentation, escalation protocols, and accountability assignments are completed before the model is built. This is how mature organizations treat security. AI governance belongs in the same category.
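
One way to make governance a development constraint is a gate that a build or deployment pipeline calls before work proceeds. The Python sketch below assumes each governance artifact is recorded as a reference to an approved document. `REQUIRED_ARTIFACTS`, `DeploymentRequest`, `governance_gate`, the "claims-triage" system, and the document IDs are hypothetical names for illustration. A real gate would also verify that the referenced documents exist and are approved.

```python
from dataclasses import dataclass, field

# Hypothetical artifact names; each must point to an approved document before work proceeds.
REQUIRED_ARTIFACTS = (
    "risk_tier_classification",
    "decision_rights",
    "escalation_protocol",
    "accountable_owner",
)


@dataclass
class DeploymentRequest:
    system: str
    artifacts: dict[str, str] = field(default_factory=dict)  # artifact name -> document reference


def governance_gate(req: DeploymentRequest) -> tuple[bool, list[str]]:
    """Return (approved, missing_artifacts). Approved only if nothing is missing."""
    missing = [name for name in REQUIRED_ARTIFACTS if not req.artifacts.get(name)]
    return (not missing, missing)


req = DeploymentRequest("claims-triage", {
    "risk_tier_classification": "RISK-2026-014",
    "accountable_owner": "GOV-OWN-031",
})
print(governance_gate(req))
# prints (False, ['decision_rights', 'escalation_protocol'])
```

The gate reports which artifacts are missing instead of simply failing. That tells the team exactly which governance step to complete next.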

Business ownership is explicit and written. Technology teams build systems. Business units own outcomes. In governance-mature organizations, that distinction is documented. The business owner has formally accepted accountability for the system, including the accountability that activates when something goes wrong.

Governance monitoring is separate from technical monitoring. Engineering tracks uptime and accuracy. Governance tracks output equity, consequential error rates, human override rates, and compliance with decision rights protocols. These are different instruments, reporting to different stakeholders. Conflating them is how organizations develop the illusion of oversight without its substance.

Boards receive structured governance reporting. Not technology roadmaps or project updates, but a specific governance report: how many AI systems are in production, what their risk classifications are, what their current compliance status is against regulatory deadlines, and what material incidents have occurred since the last report. Boards that govern AI well have built the reporting infrastructure to do it. They do not govern by instinct.

For organizations exploring what mature AI governance services look like in practice, the pattern is consistent: governance as a structural capability, not a compliance function. Businesses developing long-term AI frameworks can also benefit from understanding how to choose the right AI development company for sustained growth.

A Practical Starting Point, Prioritized by Risk, Not Perfection

Most organizations do not need a full governance transformation before their next deployment. They need to identify the weakest layer and move it first. Recognizing that AI transformation is a problem of governance, not a technology deficit, is the prerequisite for knowing where to start.

If AI systems operate in the EU without risk classification under the EU AI Act, that is the immediate priority. The European Commission’s enforcement powers apply from August 2, 2026. Risk classification is the prerequisite for every other compliance step, and it is achievable in four to eight weeks for most production system inventories.

If high-impact AI systems have no named accountable executive, start at the accountability layer. The decision rights vacuum, the monitoring mirage, and the regulatory surprise are all compounded by the accountability void. Fixing accountability first creates the organizational anchor for everything else.

If monitoring is in place but only covers technical metrics, add a governance monitoring specification that includes output equity metrics, consequential error rates, and drift-detection signals, alongside the existing technical dashboards. This is achievable in parallel with current operations without requiring a framework overhaul.

If governance policies exist but are not consistently applied, the problem is usually decision rights: protocols that exist on paper but were never operationalized. The fix is an audit of actual decision behavior against documented protocols, not a rewrite of the policy.

Closing

The models are not the problem. The models are available, capable, and increasingly affordable. What separates organizations that realize sustainable value from those that accumulate expensive failures is what surrounds those models: the accountability structure, the decision rights, the escalation protocols, the regulatory compliance posture, and the ongoing ownership of systems that make real decisions about real people.

AI transformation is a problem of governance. It has been a governance problem from the first enterprise deployment, and the regulatory environment has now made the cost of ignoring that fact legally quantifiable. The organizations that are succeeding are not the ones with the best technology. They are the ones who built the institutional infrastructure to govern it.


That infrastructure does not require perfection. It requires knowing who is responsible, what happens when something goes wrong, and how the organization demonstrates both to a regulator, a board, or the customers affected by the decision. Building that starts with an honest assessment of which governance layer is missing, and fixing it before the next deployment, not after.

FAQs

What is shadow AI, and why does it indicate a governance failure?

Shadow AI refers to employees using unapproved AI tools outside official organizational channels. It is a signal that official governance failed to meet real operational needs. When more employees use unauthorized tools than authorized ones, the governance design needs to be fixed.

What are the key EU AI Act deadlines for 2026?

The European Commission’s enforcement powers apply from August 2, 2026. From that date, the Commission can investigate organizations, issue binding orders, and impose fines. Penalties for the most severe violations reach €35 million or 7% of global annual turnover. High-risk AI systems require full compliance by August 2, 2027.

What is ISO/IEC 42001?

ISO/IEC 42001 is the international standard for AI Management Systems, published in December 2023. It provides a certifiable governance framework that maps directly to EU AI Act Article 17 quality management obligations. It is not legally mandated, but enterprise procurement processes and insurance underwriters increasingly require it for vendor qualification.

What is the NIST AI Risk Management Framework?

The NIST AI RMF (published January 2023) is a voluntary US framework for AI risk identification, assessment, and management. It defines four core functions: Govern, Map, Measure, and Manage. It is the de facto baseline for US federal contractors and regulated industries.

How is AI governance different from AI ethics?

AI ethics defines principles such as fairness, transparency, and non-discrimination. AI governance is the operational infrastructure that determines whether those principles are actually applied. Ethics without governance is intention without enforcement.

How long does it take to implement AI governance?

For organizations building from a low baseline, a functional framework covering accountability, decision rights, escalation, regulatory compliance, and monitoring takes three to six months to design and six to twelve months to embed operationally. EU AI Act risk classification for an existing production system inventory typically takes four to eight weeks with dedicated focus.

Senior SEO Content Marketing Manager at Trendusai.com

Rashida Hanif is a Senior SEO Content Marketing Manager at Trendusai.com, specializing in data-driven content strategy and SEO. She helps brands improve online visibility through keyword research, content planning, and AI-powered marketing insights.